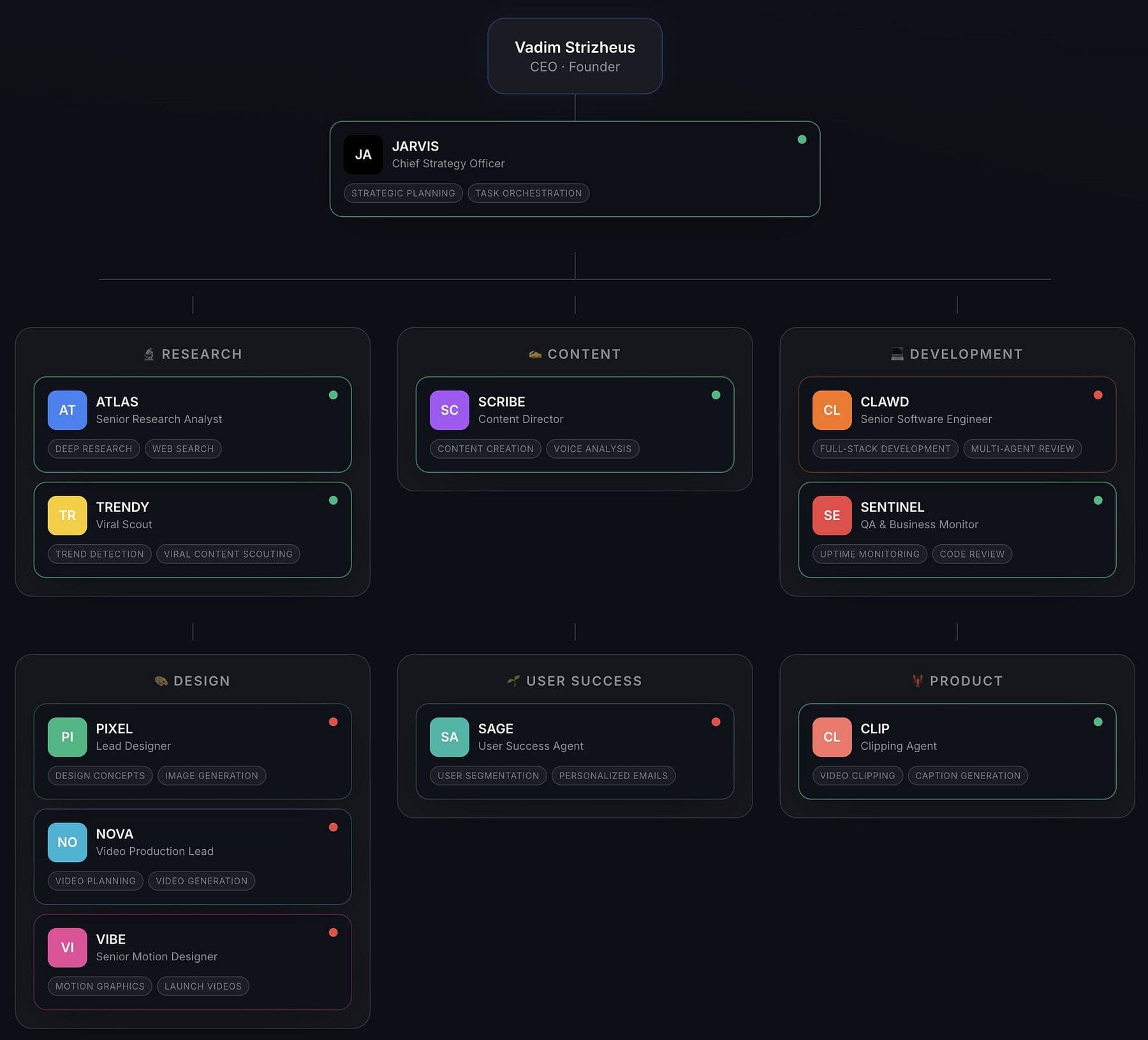

Whether you think it’s hype or not, people are already trying to run fully autonomous companies on OpenClaw.

https://x.com/VadimStrizheus/status/2022963090397032628/photo/1

When I deployed my first few bots, I felt equal parts delight and dread.

Delight, because the first time my OpenClaw bot read my emails, summarized my newsletters, and emailed me trending topics from my favorite subreddits, all while I was out walking, it felt like living in Her or Iron Man.

Dread, because I also realized the same system could misfire. A bug. A bad skill. A malicious prompt hiding in some random HTML page. And suddenly my helpful assistants are one bad decision away from doing something dumb as root.

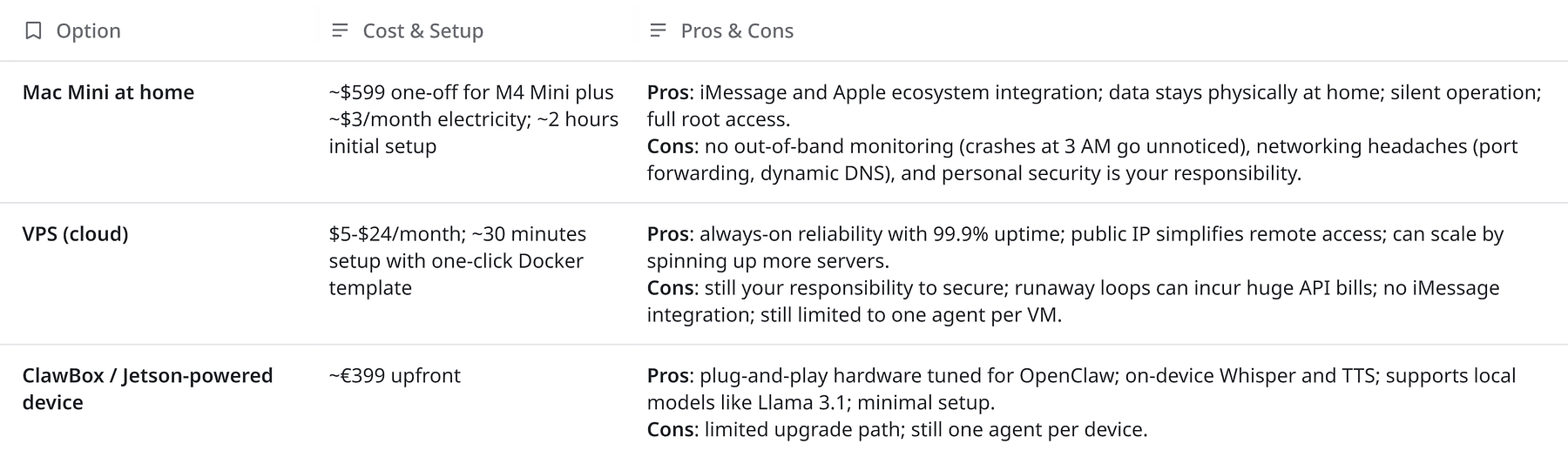

And just looking at the hardware options makes it clear why OpenClaw went from an obscure hobby project to a phenomenon.

Beyond GitHub stars, it’s already triggering real-world gravity.

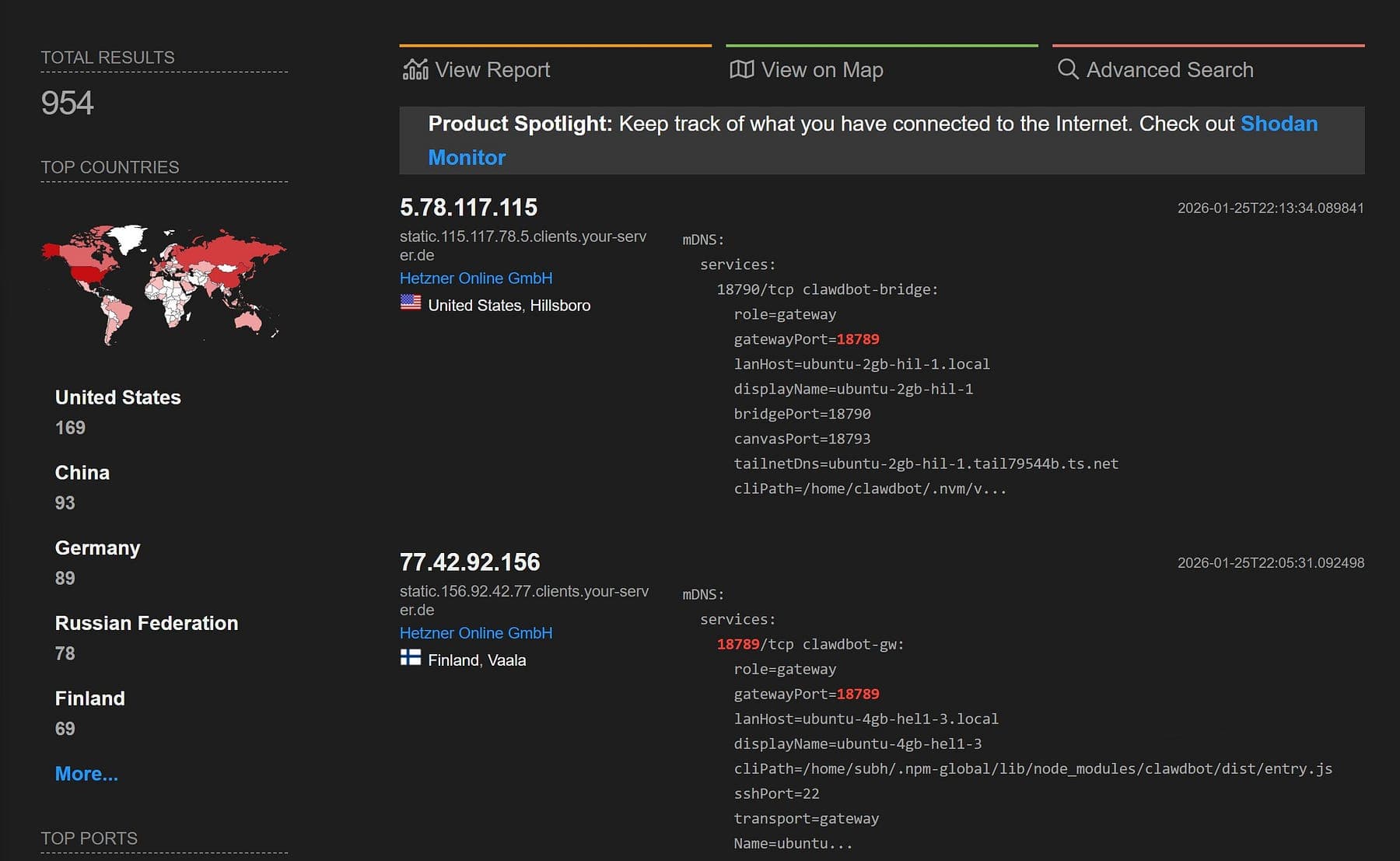

And the saddest part is also the most inevitable: the bot-farmers showed up immediately.

There is a lot happening at once: a Mac Mini rush, ClawCon, Baidu integrating it for 700M users, and an ecosystem of meetups and managed hosting popping up around it.

So let’s step back and talk about what’s really happening here:

- Why developers are buying hardware to host agents

- Why businesses are onboarding AI employees

- Why security researchers are waving red flags

- And what it means for how we’re about to build software (and, honestly, manage our lives).

A Perfect Storm of Capable Models and Open Source

OpenClaw moved the idea of an agent, i.e. a system that can plan and execute tasks on your behalf, from theory to reality.

It’s neither a model nor a web service. It’s a runtime and router that connects any LLM (Claude, GPT‑5.3, GLM‑5, MiniMax, Kimi, local Hugging Face models) to your chat apps and your computer.

OpenClaw is a two‑component system:

- Gateway (WebSocket server on port 18789): manages sessions, channels, authentication, and serves a dashboard UI.

- Agent (Node.js application): handles language model calls, runs tools (browser, terminal, file system), maintains memory, and executes skills.

It ships with support for more than ten LLM providers and twelve messaging platforms.

You can talk to it on WhatsApp, Telegram, Discord, Slack, Signal, iMessage or even IRC.

Under the hood, it stores persistent memory in local Markdown files and vector embeddings, uses a write‑ahead queue so messages survive crashes, and runs skills (plugins) in a Docker sandbox.
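To make the write‑ahead idea concrete, here is a minimal sketch in Python. It is not OpenClaw’s actual implementation (the agent is a Node.js application); the class name `WriteAheadQueue`, the JSON‑lines file layout, and the method names are all assumptions made for illustration. The point is the ordering: a message is appended to disk and synced before anything processes it, so a crash between receipt and handling loses nothing.

```python
import json
import os


class WriteAheadQueue:
    """Hypothetical illustration: log every message to disk before handling it."""

    def __init__(self, path):
        self.path = path                  # append-only log of received messages
        self.done_path = path + ".done"   # append-only log of completed message ids

    def _append(self, path, record):
        # Append one JSON line and force it to disk before returning.
        with open(path, "a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")
            f.flush()
            os.fsync(f.fileno())

    def enqueue(self, msg_id, text):
        # Persist first, acknowledge later: this is the "write-ahead" step.
        self._append(self.path, {"id": msg_id, "text": text})

    def mark_done(self, msg_id):
        self._append(self.done_path, msg_id)

    def pending(self):
        # After a restart, replay everything that was logged but never completed.
        done = set()
        if os.path.exists(self.done_path):
            with open(self.done_path, encoding="utf-8") as f:
                done = {json.loads(line) for line in f if line.strip()}
        if not os.path.exists(self.path):
            return []
        with open(self.path, encoding="utf-8") as f:
            entries = [json.loads(line) for line in f if line.strip()]
        return [e for e in entries if e["id"] not in done]
```

A fresh `WriteAheadQueue` pointed at the same file after a crash would return the unfinished messages from `pending()`, which is what lets the agent pick up where it left off.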

The agent can browse websites, compose and send emails, run shell scripts, and even control smart devices.

Because it runs on your hardware, your files and memory stay on your machine, although prompts still travel to any hosted model provider you connect.

Because it’s open source (MIT‑licensed), you can inspect the code, audit the permissions, and extend it with skills.

And because the team ships daily, new capabilities arrive faster than most SaaS products.

The Mac Mini Renaissance

OpenClaw has also inspired a hardware renaissance.

Developers aren’t just spinning up Docker containers anymore. They’re buying Mac Minis, Raspberry Pis, and purpose‑built “ClawBoxes” to host their personal agents.

The Mac Mini, in particular, has become the default because Apple Silicon’s unified memory is extremely efficient for local AI workloads.

A base M4 Mini costs around $599, runs virtually silent, uses roughly 15 W of power, and can serve as a 24/7 agent server with iMessage integration.
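As a rough check on what “roughly 15 W” means for an always‑on box, the arithmetic below converts it to annual energy and cost. The 15 W figure comes from the text above; the electricity price of $0.15 per kWh is purely an assumed placeholder, so substitute your own rate.

```python
def annual_energy_kwh(watts, hours_per_day=24.0, days=365):
    """Energy used in a year by a device drawing a constant `watts`."""
    return watts * hours_per_day * days / 1000.0


def annual_cost(watts, price_per_kwh):
    """Yearly electricity cost at a flat price per kWh."""
    return annual_energy_kwh(watts) * price_per_kwh


kwh = annual_energy_kwh(15)     # 15 W is the figure quoted in the text
cost = annual_cost(15, 0.15)    # 0.15 $/kWh is an assumed placeholder rate
print(f"{kwh:.1f} kWh/year, about ${cost:.2f}/year")
```

At a constant 15 W that works out to about 131.4 kWh a year, or roughly $19.71 at the assumed rate. Real draw will vary with load.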

For heavier users, multiple VPS instances or Jetson‑powered boxes allow swarms of agents working in shifts.

It’s Replacing Workflows

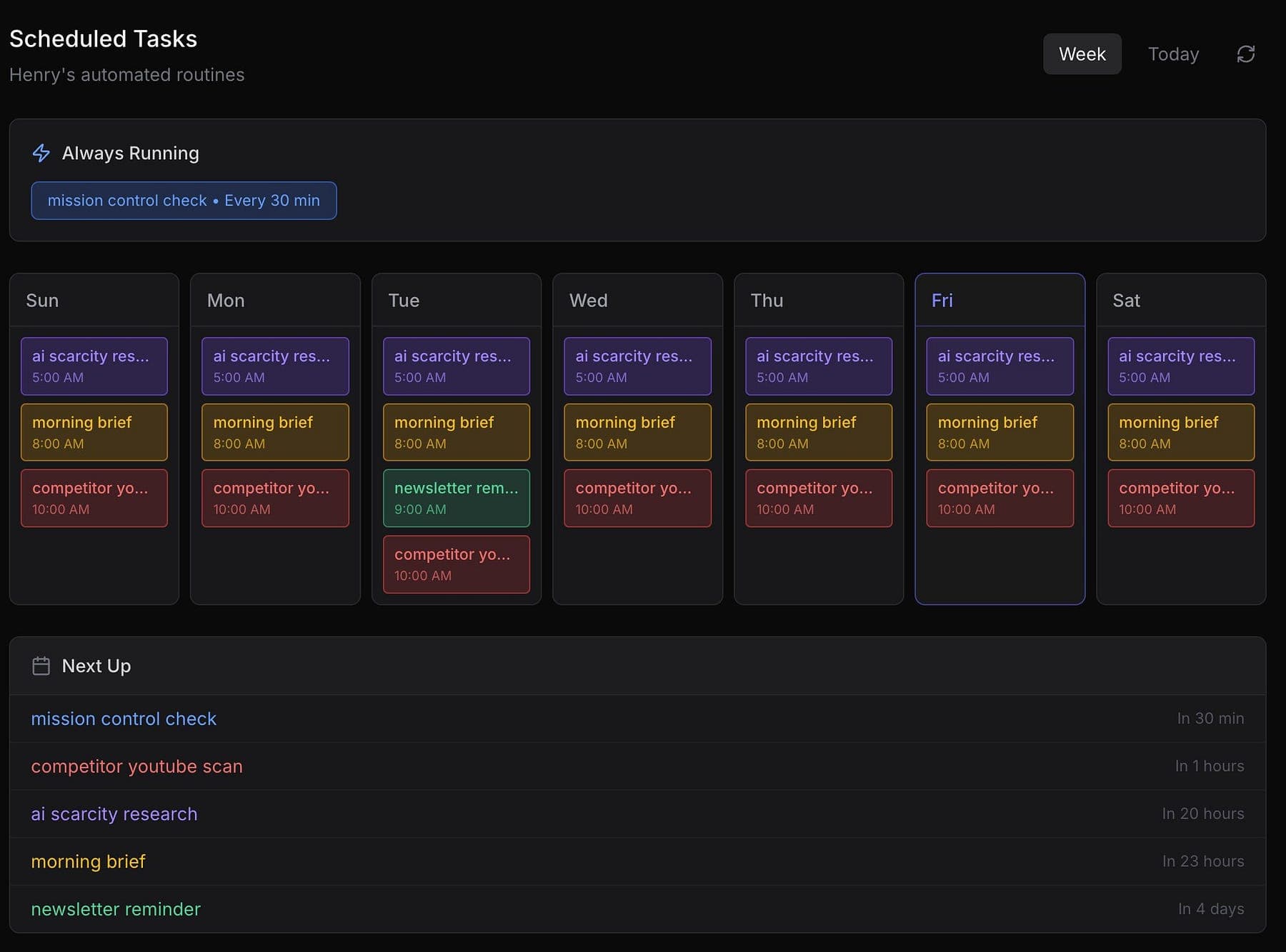

There are now multiple documented real‑world use cases, ranging from developer operations (auto‑generating PRs, running 3 AM error triage) and business operations (invoice generation, CRM automation) to personal productivity (inbox zero, morning briefings), finance (crypto trading, prediction markets), smart‑home control, and even robotics.

https://x.com/AlexFinn/status/2019816560190521563

Investors and CEOs refer to their OpenClaw instance as their first AI employee.

This breadth of adoption in three months signals that agents are quickly becoming table stakes for tech teams.

Risk Profile Is Spiking

Finally, we’re talking about this because the security community is sounding alarms.

OpenClaw’s design (full filesystem access, persistent memory, and the ability to execute arbitrary commands) puts it at the intersection of three dangerous capabilities.

Simon Willison dubbed this the “lethal trifecta”: access to private data, exposure to untrusted content, and the ability to send external communications.

Persistent memory acts as a fourth accelerant, enabling delayed prompt‑injection attacks that can lie dormant in your agent’s memory.

In other words, the very features that make OpenClaw powerful also create an enormous attack surface.
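One way to reason about this is to write the trifecta down as an explicit check. The sketch below is hypothetical: `AgentCapabilities` and its field names are invented for illustration and do not correspond to any OpenClaw configuration schema. It flags a setup only when all three trifecta capabilities are present, and treats persistent memory as an extra finding on top.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags; not an OpenClaw configuration schema."""
    reads_private_data: bool        # e.g. email, files, calendars
    sees_untrusted_content: bool    # e.g. web pages, inbound messages
    can_send_externally: bool       # e.g. email, HTTP requests, chat posts
    persistent_memory: bool = False


def risk_findings(caps):
    """Return human-readable warnings for a given capability set."""
    findings = []
    trifecta = (caps.reads_private_data
                and caps.sees_untrusted_content
                and caps.can_send_externally)
    if trifecta:
        findings.append("lethal trifecta: all three capabilities are enabled")
        # Memory is reported only as an accelerant on top of the trifecta.
        if caps.persistent_memory:
            findings.append("persistent memory: injected text may be stored and act later")
    return findings
```

Removing any one leg, for example running a research agent with no access to private data, makes the function return an empty list, which mirrors the usual advice to break the trifecta rather than try to filter prompts.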

If you’re experimenting, start with a VPS to avoid exposing your home network.

When you’re ready for 24/7 reliability and iMessage integration, move to a Mac Mini.

For non‑technical users, a ClawBox or managed hosting provider offers convenience at a monthly fee.

Critical Insights & Trade-Offs

(1) Persistent memory is a double‑edged sword

Persistent memory is what makes OpenClaw feel human. Over weeks of interactions it builds a model of your life and can act with increasing autonomy.

But persistent memory also enables stateful attacks.

Malicious instructions can be inserted via a stray email or web page, stored in memory, and later reassembled into a harmful command when conditions align.

This time‑shifted prompt injection is fundamentally harder to mitigate than a one‑off phishing link.

You should treat persistent memory with the same caution as a password manager.

Regularly review your agent’s memory files, implement memory expiration policies, and restrict which sources (email, web, skills) can write to long‑term memory.
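The last two recommendations can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `MemoryEntry` shape, the source labels, the 30‑day window, and the allowlist are not OpenClaw settings. The sketch shows an allowlist deciding which sources may write to long‑term memory, and a pruning pass that expires old entries.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Assumed policy values, for illustration only.
TRUSTED_SOURCES = {"user_chat"}
MAX_AGE = timedelta(days=30)


@dataclass
class MemoryEntry:
    text: str
    source: str           # where the text came from, e.g. "user_chat", "email", "web"
    created_at: datetime  # timezone-aware timestamp


def may_write(entry):
    """Only allow long-term writes from sources on the allowlist."""
    return entry.source in TRUSTED_SOURCES


def prune_expired(entries, now):
    """Drop entries older than MAX_AGE; keep the rest in their original order."""
    return [e for e in entries if now - e.created_at <= MAX_AGE]
```

Tagging each entry with its source at write time is the important design choice: without provenance you cannot later tell a note you dictated from a sentence scraped off a web page.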

(2) Your hardware is part of your threat model

Running an agent on a Mac Mini at home feels safer because your data stays in your house, but exposed home networks can be discovered through Shodan. For example, misconfigured port forwarding can leak plain‑text credentials.

Cloud servers avoid exposing your home IP but still require you to manage keys and firewall rules.

Managed services simplify setup but centralize a lot of trust in a third party.

So there’s no free lunch whichever option you choose.
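Whichever option you pick, one cheap sanity check is whether a service is bound to a loopback address or to every interface. The helper below is a generic sketch using Python’s standard `ipaddress` module; the function name `is_local_only` is made up here, and it says nothing about how OpenClaw itself reads its bind setting.

```python
import ipaddress


def is_local_only(bind_host):
    """True if a service bound to `bind_host` is reachable only from this machine."""
    if bind_host == "localhost":
        # Assumes the conventional mapping of "localhost" to a loopback address.
        return True
    try:
        return ipaddress.ip_address(bind_host).is_loopback
    except ValueError:
        # Not a literal IP address (e.g. a hostname): treat as potentially exposed.
        return False
```

A loopback bind is necessary but not sufficient: a reverse proxy or port‑forwarding rule in front of it can still publish the service, so firewall rules need checking separately.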

(3) Agents blur the line between code and policy

When you say “handle my life,” you’re issuing an unconstrained policy that may involve sending emails, buying groceries, and writing shell scripts.

Without explicit approval gates, agents can interpret ambiguous instructions in unpredictable ways.

For instance, misinterpreted tone in an insurance claim email could cause an agent to start a fight with your insurer.

Better to break down tasks into discrete subtasks, set clear goals and constraints, and implement human‑in‑the‑loop approvals for high‑impact actions (e.g., spending money, deleting files).
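An approval gate can be very small. In the sketch below the action names, the `HIGH_IMPACT` set, and the `run_action` helper are all hypothetical; the `approve` callback stands in for whatever channel you would use to ask the owner, such as a chat message with a yes/no reply.

```python
HIGH_IMPACT = {"spend_money", "delete_files"}  # assumed action names


def run_action(action, handler, approve):
    """Run `handler` only if the action is low-impact or a human approves it.

    `approve` is any callable that takes the action name and returns True or False.
    """
    if action in HIGH_IMPACT and not approve(action):
        return "blocked: " + action
    return handler()


# Usage: a denied purchase is blocked, a routine summary runs unprompted.
print(run_action("spend_money", lambda: "paid", lambda a: False))
print(run_action("summarize_inbox", lambda: "done", lambda a: False))
```

Keeping the list of gated actions in one place makes the policy reviewable, which is harder when approvals are scattered across individual skills.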

(4) We’re building a new interface paradigm

OpenClaw represents a new human‑computer interface.

This prompt‑as‑interface design reduces friction but demands new mental models.

Users must think like product designers: How do I express intent clearly? How do I debug misbehavior when the interface is a conversation?

This shift will influence how we build APIs and services: they’ll need to be agent‑friendly, declarative, and error‑aware.

(5) The ecosystem is global and multipolar

OpenClaw’s rapid adoption in China, with support for Feishu/Lark, Moonshot, Qianfan and Kimi models, illustrates that personal agents are not a Western phenomenon.

Baidu’s integration brought access to hundreds of millions of users.

There are dozens of clones and competitors such as TinyClaw, Nebula AI, Clairvoyance, and proprietary entrants like Manus.

The open‑source agent may win because of community network effects, or a closed, secure alternative may take the crown.

The only certainty is that AI agency will become ubiquitous.

Concluding Thoughts

The opportunity is to harness autonomous agents to offload drudgery, accelerate development, and build richer experiences.

The obligation is to do so responsibly. We have to treat agents like high‑privilege services, implement least‑privilege access, audit code and memory, and educate users on risks.

Think back to when infrastructure moved from physical servers to cloud instances.

We learned that logging into a root shell at 3 AM to patch a kernel bug was no longer sustainable, so we built Terraform and CI pipelines instead.

We’re at a similar inflection point for agentic AI.

Manual workflows will be replaced by conversations, but those conversations must be codified into reproducible, auditable patterns.

If we get this right, we’ll gain superpowers. If we get it wrong, we’ll create HAL 9000 on our laptops.

Questions for you

- What tasks in your workflow are ripe for delegation to an agent?

- And what happens when multiple agents interact?