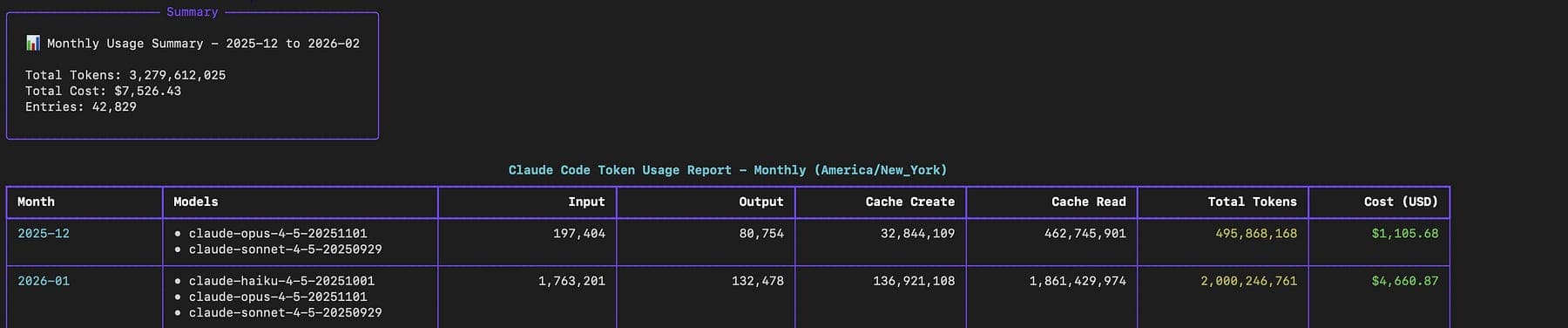

Last month I opened my credit‑card statement and almost threw up. Anthropic charged me $4,660.87, just for Claude.

I use OpenClaw every day, but I never stopped to think about what I was actually paying for.

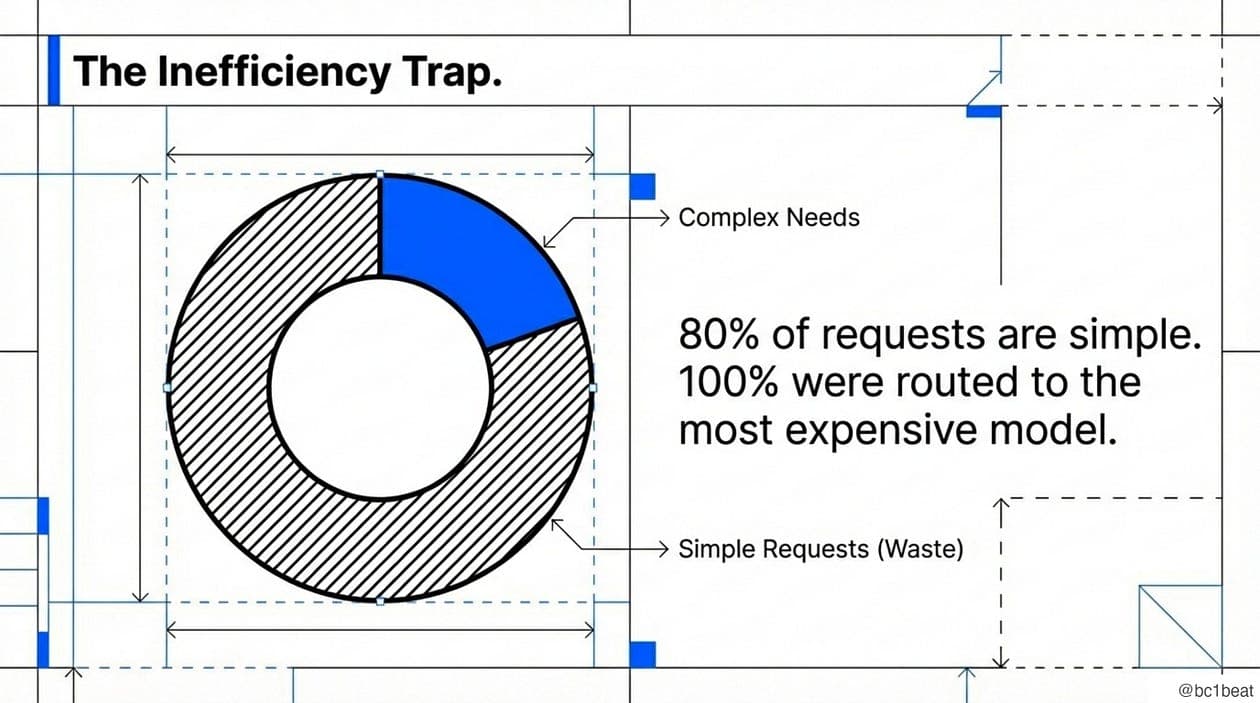

Most requests, no matter how simple, were routed to the Claude Opus model at $75 per million tokens.


It was like taking a Ferrari to the grocery store.

Most of my requests are simple. Autocomplete this line. Explain this error. Fix this syntax. Maybe twenty percent of what I do actually requires a powerful model.

I had no idea which requests were being routed to the most expensive model. Because switching models manually is annoying, I just left everything on Opus and hemorrhaged money.

This realization kept me up at night. So I built something to fix it.

This is a guest post written by bc1beat and it deep dives into smart LLM routing for agentic applications. We have no affiliation with ClawRouter, aside from introducing it to developers building multi-agent systems with OpenClaw. Enjoy the read and drop your questions in the comments.

Getting started with ClawRouter

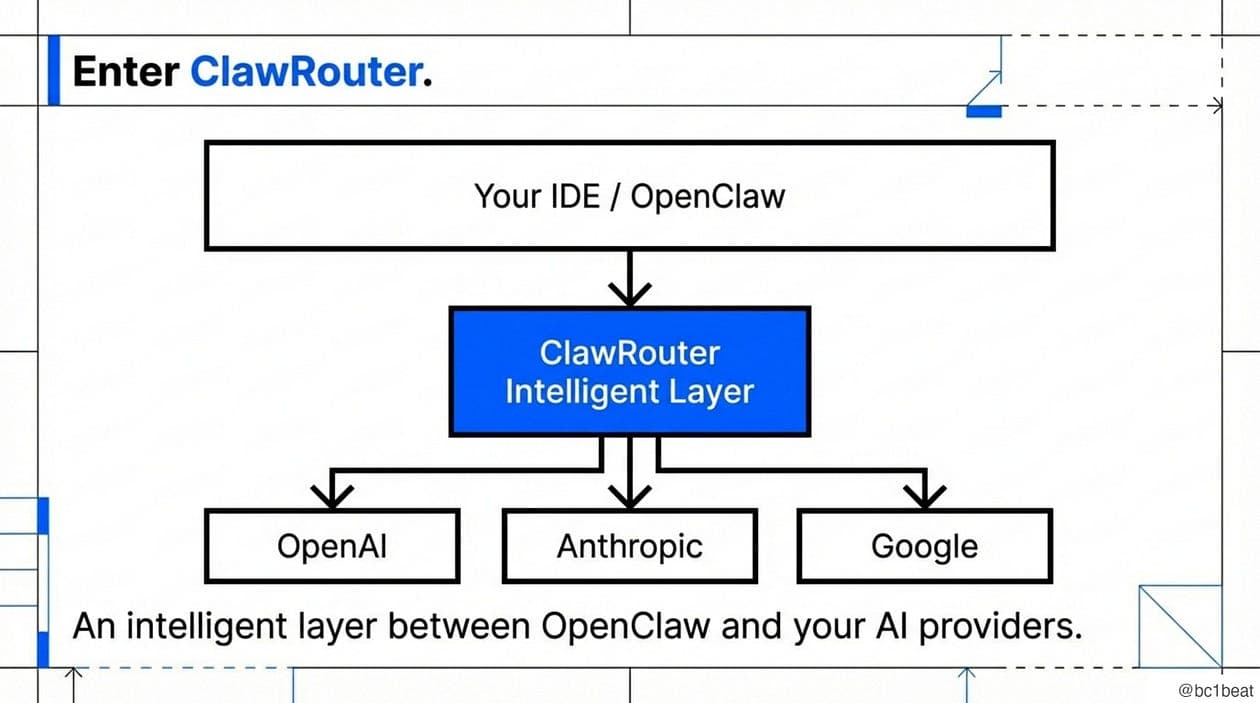

ClawRouter is a smart, local router that sits between OpenClaw (or any OpenAI‑compatible client) and the AI providers.

Every time you send a request, it looks at what you’re asking and automatically picks the cheapest model that can handle it.

A typical workflow looks like this:

The routing decision happens locally on your machine.

No external API calls are involved, and the scoring runs in under 1 ms, so routing adds no noticeable latency.

To start saving money, install the plugin, fund your wallet and enable the smart model:

```bash
# 1. Install: auto-generates a wallet on Base
openclaw plugins install @blockrun/clawrouter

# 2. Fund your wallet with USDC on Base (address printed on install)
# $5 is enough for thousands of requests

# 3. Restart OpenClaw to load the plugin
openclaw restart
```

Every request now routes through BlockRun with x402 micropayments.

x402 is a new open payment protocol developed by Coinbase that enables instant, automatic stablecoin payments directly over HTTP. By reviving the HTTP 402 Payment Required status code, x402 lets services monetize APIs and digital content onchain, allowing clients, both human and machine, to programmatically pay for access without accounts, sessions, or complex authentication.
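To make the pay-on-402 idea concrete, here is a minimal sketch of the client side: send the request, and if the server answers 402, sign what it asks for and retry once. This is not ClawRouter's or Coinbase's code. The `Send` and `Sign` types, the `payAndRetry` name, the `X-PAYMENT` header name and the choice to read the payment requirements from the response body are all assumptions for illustration, so check the x402 spec version you target.

```typescript
// Hypothetical transport and signer, injected so the flow is easy to test.
interface HttpResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}
type Send = (extraHeaders: Record<string, string>) => Promise<HttpResponse>;
type Sign = (paymentRequirements: string) => Promise<string>;

// Assumption: the header name differs between x402 versions.
const PAYMENT_HEADER = "X-PAYMENT";

async function payAndRetry(send: Send, sign: Sign): Promise<HttpResponse> {
  const first = await send({});
  // Anything other than 402 needs no payment (or failed for another reason).
  if (first.status !== 402) return first;

  // Assumption: the 402 response body describes amount, asset and recipient.
  const payment = await sign(first.body);

  // Retry the same request with the signed payment attached.
  const second = await send({ [PAYMENT_HEADER]: payment });
  if (second.status === 402) throw new Error("Payment was not accepted");
  return second;
}
```

The point of the pattern is that the wallet signature replaces the API key: there is no account to create, only a request that is retried with proof of payment.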

To enable smart routing, add to ~/.openclaw/openclaw.json:

```json
{
  "agents": {
    "defaults": {
      "model": {
        "primary": "blockrun/auto"
      }
    }
  }
}
```

Or use /model blockrun/auto in any conversation to switch on the fly.

Already have a funded wallet?

Export its key with `export BLOCKRUN_WALLET_KEY=0x...`. If you want to use a specific model, you can use `blockrun/openai/gpt-4o` or `blockrun/anthropic/claude-sonnet-4` and still get x402 payments and usage logging.

Let’s see ClawRouter in action:

ClawRouter in action

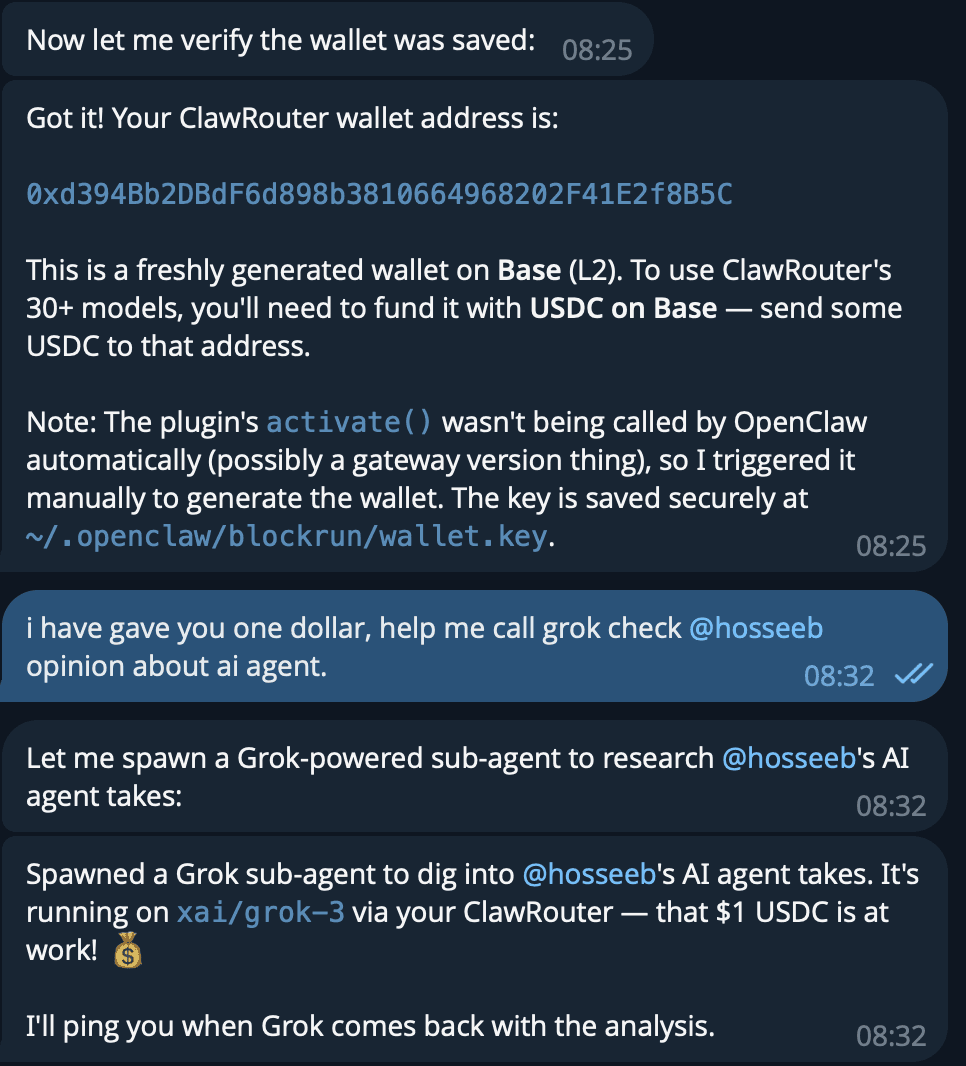

The flow:

- Wallet auto-generated on Base (L2) and saved securely at `~/.openclaw/blockrun/wallet.key`

- Fund with $1 USDC, which is enough for hundreds of requests

- Request any model, e.g. “help me call Grok to check @hosseeb’s opinion on AI agents”

- ClawRouter routes it, e.g. spawns a Grok sub-agent via `xai/grok-3`, pays per-request

Architecture: local, open, and agent‑friendly

ClawRouter’s architecture is beautifully simple:

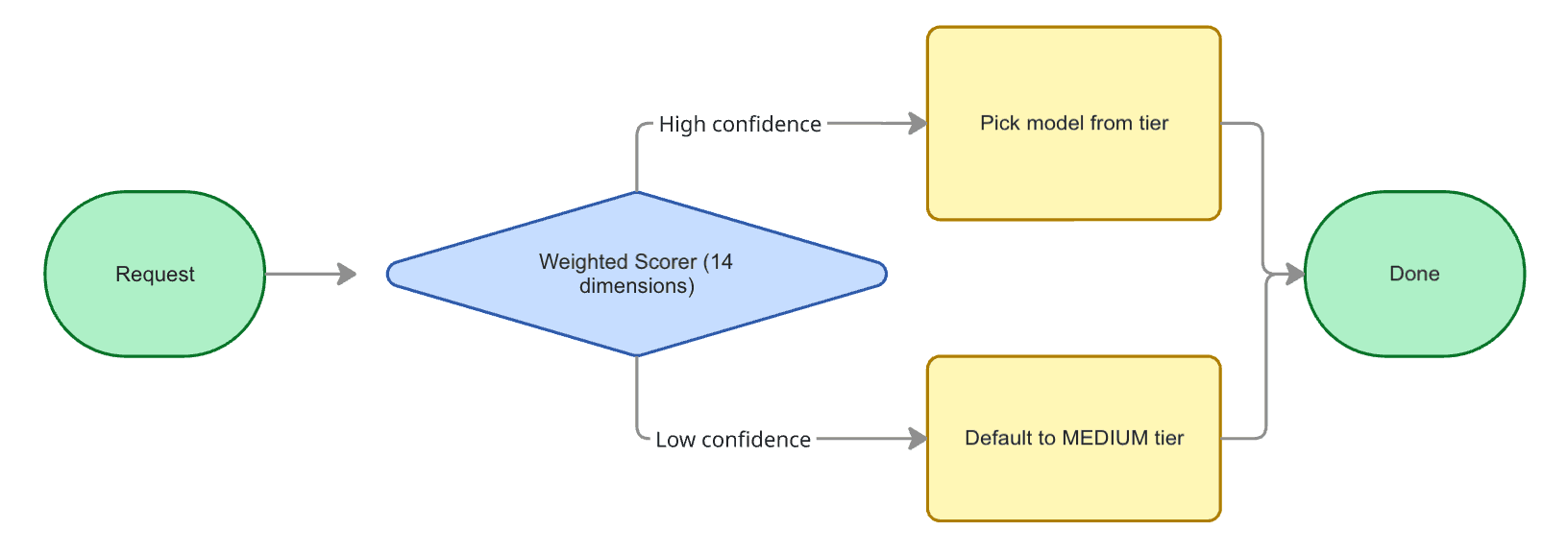

(1) Weighted Scorer: a rule‑based classifier looks at your prompt (and optional system prompt) across 14 dimensions such as reasoning markers, code presence, simple vs complex indicators, token length, technical terms, imperative verbs, constraints, output format, domain specificity, and more.

Each dimension is weighted, and the weighted sum goes through a sigmoid function to produce a confidence score and a tier (SIMPLE, MEDIUM, COMPLEX or REASONING).

If two or more reasoning markers are present it jumps straight to the REASONING tier.

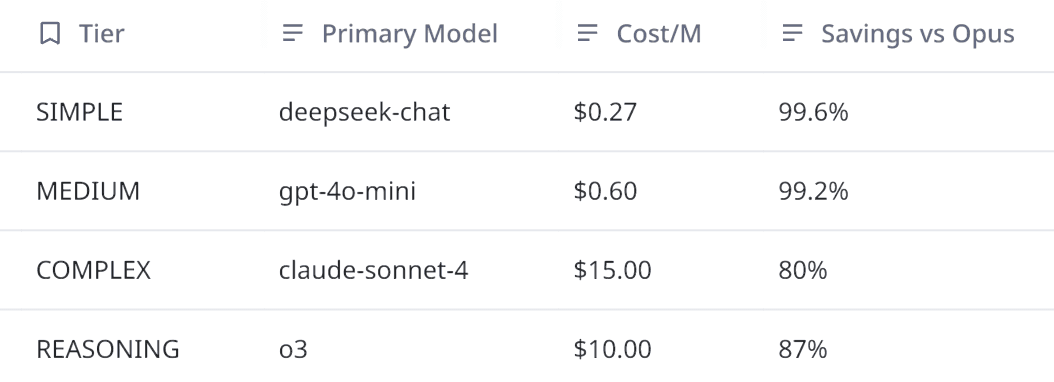

(2) Model Selector: given a tier and confidence, the selector maps to the cheapest capable model. It also computes the estimated cost and the savings compared to a baseline of Claude Opus.

(3) x402 Signer: requests to BlockRun’s API go through a local proxy that implements the x402 micropayment protocol. Payment is authentication: your wallet signs the cost (displayed in the 402 header), and the request continues. There are no API keys, no accounts, and no custodial balances; the USDC stays in your wallet until spent.

Because routing and payment happen locally, privacy and latency are preserved.

The proxy includes clever optimizations: response deduplication (30s cache), streaming heartbeats to prevent upstream timeouts, and payment pre‑authorization that skips extra round trips.
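The Model Selector step can be sketched in a few lines: filter the models that can handle the tier, take the cheapest, and compare its price with the Opus baseline. This is a rough illustration, not ClawRouter's actual table. The `maxTier` field, the `selectModel` name, the exact model ids and the o3 price are assumptions; the other prices are the per-million-token figures quoted in this post, plus $15 for Claude Sonnet 4 output.

```typescript
type Tier = "SIMPLE" | "MEDIUM" | "COMPLEX" | "REASONING";
const TIER_RANK: Record<Tier, number> = { SIMPLE: 0, MEDIUM: 1, COMPLEX: 2, REASONING: 3 };

interface Model {
  id: string;
  maxTier: Tier; // hardest tier this model is trusted with (assumption)
  usdPerMillionTokens: number;
}

// Illustrative table only.
const MODELS: Model[] = [
  { id: "deepseek/deepseek-chat", maxTier: "SIMPLE", usdPerMillionTokens: 0.27 },
  { id: "openai/gpt-4o-mini", maxTier: "MEDIUM", usdPerMillionTokens: 0.6 },
  { id: "anthropic/claude-sonnet-4", maxTier: "COMPLEX", usdPerMillionTokens: 15 },
  { id: "openai/o3", maxTier: "REASONING", usdPerMillionTokens: 40 }, // placeholder price
];

const BASELINE_USD_PER_MILLION = 75; // Claude Opus, as quoted above

function selectModel(tier: Tier, estimatedTokens: number) {
  // Keep only models capable of this tier, then pick the cheapest one.
  const capable = MODELS.filter((m) => TIER_RANK[m.maxTier] >= TIER_RANK[tier]);
  const cheapest = capable.reduce((a, b) =>
    b.usdPerMillionTokens < a.usdPerMillionTokens ? b : a
  );
  return {
    model: cheapest.id,
    estimatedCostUsd: (estimatedTokens / 1_000_000) * cheapest.usdPerMillionTokens,
    // Fraction saved relative to sending the same tokens to the baseline.
    savings: 1 - cheapest.usdPerMillionTokens / BASELINE_USD_PER_MILLION,
  };
}

console.log(selectModel("COMPLEX", 2000).model); // "anthropic/claude-sonnet-4"
```

With these numbers a COMPLEX request still saves 80% against the Opus baseline, which is why even the expensive tiers are worth routing.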

How the scoring works

The heart of ClawRouter is the 14‑dimension weighted scorer.

Here are a few highlights from src/router/rules.ts:

- Token count: short prompts (<50 tokens) push the score towards the SIMPLE tier while long prompts (>500 tokens) push towards COMPLEX.

- Keyword matches: lists of reasoning words (e.g. prove, theorem, step by step) contribute up to +1.0 to the score; code keywords (function, import, backticks) contribute up to +1.0; simple indicators (what is, define) actually subtract, pushing you to the SIMPLE tier.

- Multi‑step patterns and question complexity: multiple steps or several question marks indicate more reasoning.

- Imperative verbs, constraints, output formats: these incremental features help differentiate instructions that ask for code generation or structured output.

- Domain specificity and negation complexity: detect specialized domains (quantum, genomics) or multiple negations, raising the tier when necessary.

The weighted sum is calibrated via a sigmoid.

If the confidence is below a configurable threshold, the router falls back to the MEDIUM tier (DeepSeek/GPT‑4o‑mini).
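Here is a stripped-down sketch of that pipeline with four dimensions instead of fourteen. The marker lists echo the examples above, but the weights, the tier boundaries, the steepness of 12 and the 0.7 threshold are assumptions I picked for illustration, not the values in src/router/rules.ts.

```typescript
type Tier = "SIMPLE" | "MEDIUM" | "COMPLEX" | "REASONING";

const REASONING_MARKERS = ["prove", "theorem", "step by step"];
const CODE_MARKERS = ["function", "import", "`"];
const SIMPLE_MARKERS = ["what is", "define"];

// Number of distinct markers that appear in the prompt.
function hits(prompt: string, markers: string[]): number {
  const text = prompt.toLowerCase();
  return markers.filter((m) => text.includes(m)).length;
}

// Each dimension returns a value in [-1, 1]; weights are illustrative.
const DIMENSIONS: { weight: number; score: (p: string) => number }[] = [
  { weight: 0.3, score: (p) => Math.min(hits(p, REASONING_MARKERS), 1) },
  { weight: 0.25, score: (p) => Math.min(hits(p, CODE_MARKERS), 1) },
  { weight: 0.25, score: (p) => -Math.min(hits(p, SIMPLE_MARKERS), 1) },
  {
    weight: 0.2,
    score: (p) => {
      const tokens = Math.ceil(p.length / 4); // crude estimate, ~4 chars per token
      return tokens < 50 ? -1 : tokens > 500 ? 1 : 0;
    },
  },
];

// Score boundaries between SIMPLE|MEDIUM, MEDIUM|COMPLEX, COMPLEX|REASONING.
const BOUNDARIES = [0, 0.15, 0.25];
const TIERS: Tier[] = ["SIMPLE", "MEDIUM", "COMPLEX", "REASONING"];

const sigmoid = (x: number): number => 1 / (1 + Math.exp(-x));

function classify(prompt: string, minConfidence = 0.7): { tier: Tier; confidence: number } {
  // Hard override: two or more reasoning markers skip the scoring entirely.
  if (hits(prompt, REASONING_MARKERS) >= 2) return { tier: "REASONING", confidence: 1 };

  const sum = DIMENSIONS.reduce((acc, d) => acc + d.weight * d.score(prompt), 0);
  const tier = TIERS[BOUNDARIES.filter((b) => sum >= b).length];
  // Confidence grows with the distance from the nearest tier boundary.
  const distance = Math.min(...BOUNDARIES.map((b) => Math.abs(sum - b)));
  const confidence = sigmoid(12 * distance);
  // Ambiguous prompts fall back to the MEDIUM tier.
  return confidence < minConfidence ? { tier: "MEDIUM", confidence } : { tier, confidence };
}

console.log(classify("What is 2+2?").tier); // "SIMPLE"
console.log(classify("Prove the theorem step by step").tier); // "REASONING"
```

Reading confidence as distance from a tier boundary is one plausible interpretation of "calibrated via a sigmoid": a score that lands right on a boundary gets a confidence of 0.5 and falls back to MEDIUM.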

Overriding thresholds or adding custom keywords is as simple as editing your openclaw.yaml:

```yaml
plugins:
  - id: "@blockrun/clawrouter"
    config:
      routing:
        # Override tier models
        tiers:
          COMPLEX:
            primary: "openai/gpt-4o"
          SIMPLE:
            primary: "google/gemini-2.5-flash"
        # Override scoring keywords
        scoring:
          reasoningKeywords: ["proof", "theorem", "formal verification"]
          codeKeywords: ["function", "class", "async", "await"]
```

Single agent usage: saving money with zero friction

For most developers, the easiest way to adopt ClawRouter is via the OpenClaw plugin:

- Installation and wallet creation: running `openclaw plugins install @blockrun/clawrouter` automatically creates a wallet in `~/.openclaw/blockrun/wallet.key` and prints its address. Fund it with a small amount of USDC on Base and you’re ready.

- Smart routing: set `blockrun/auto` as your default model. From this point on, every request you send (via OpenClaw CLI, your local `openai` Python library, or any other client pointed at the ClawRouter proxy) will automatically go to the right model.

- Logging: usage is logged as JSON lines in `~/.openclaw/blockrun/logs/`, so you can audit costs per request.
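Because the logs are JSON lines, auditing them takes only a few lines of code. The sketch below totals cost per model from the text of a log file. The field names `model` and `cost` are assumptions on my part, so check a real log line and adjust the `UsageEntry` interface before relying on it.

```typescript
// Hypothetical shape of one log line; the real field names may differ.
interface UsageEntry {
  model: string;
  cost: number; // USD charged for the request
}

// Sum the cost per model from the raw text of a JSON-lines log file.
function costByModel(jsonl: string): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const line of jsonl.split("\n")) {
    if (line.trim() === "") continue; // skip blank lines and the trailing newline
    const entry = JSON.parse(line) as UsageEntry;
    totals[entry.model] = (totals[entry.model] ?? 0) + entry.cost;
  }
  return totals;
}

const sample = [
  '{"model":"openai/gpt-4o-mini","cost":0.25}',
  '{"model":"anthropic/claude-sonnet-4","cost":1.5}',
  '{"model":"openai/gpt-4o-mini","cost":0.5}',
].join("\n");

console.log(costByModel(sample));
```

In practice you would read each file under the logs directory and pass its contents to `costByModel`; a model that dominates the totals is a hint that some prompts are being scored higher than they need to be.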

Here’s a minimal TypeScript example without OpenClaw:

```typescript
import { startProxy } from "@blockrun/clawrouter";

const proxy = await startProxy({
  walletKey: process.env.BLOCKRUN_WALLET_KEY!,
  onReady: (port) => console.log(`Proxy on port ${port}`),
  onRouted: (d) => console.log(`${d.model} saved ${(d.savings * 100).toFixed(0)}%`),
});

// Any OpenAI-compatible client works
const res = await fetch(`${proxy.baseUrl}/v1/chat/completions`, {
  method: "POST",
  headers: { "Content-Type": "application/json" },
  body: JSON.stringify({
    model: "blockrun/auto",
    messages: [{ role: "user", content: "What is 2+2?" }],
  }),
});

console.log(await res.json());

await proxy.close();
```

The proxy inspects the prompt, selects the cheapest model, signs the micropayment, forwards the request and returns the result.

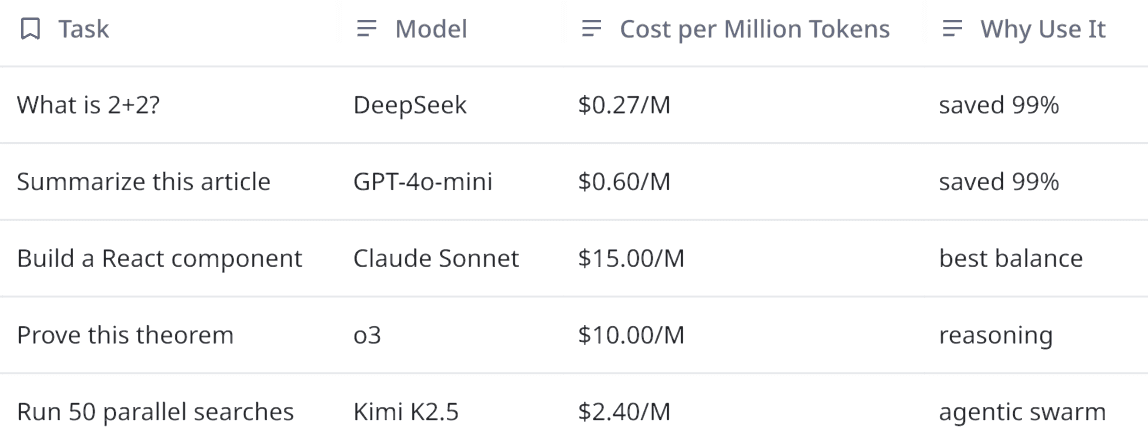

In my own tests, trivial prompts (e.g. “Summarize this article.”) are routed to gpt‑4o‑mini at $0.60/M tokens, while deep reasoning (e.g. “Prove that √2 is irrational.”) goes to o3 or Claude Sonnet.

Observability and budgets

ClawRouter exposes a local health endpoint at /health that returns wallet status and optionally your USDC balance.

You can hook into onLowBalance and onInsufficientFunds callbacks to warn or halt operations. The roadmap includes spend controls and an analytics dashboard.
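One way to use those two callbacks is a small budget guard that your agents consult before doing optional work. This is a sketch under assumptions: I assume the callbacks can be invoked without required arguments, and `makeBudgetGuard`, `allow` and the three modes are names I made up for illustration.

```typescript
type Mode = "normal" | "degraded" | "halted";

function makeBudgetGuard() {
  let mode: Mode = "normal";
  return {
    // Assumption: ClawRouter calls these without required arguments.
    onLowBalance: (): void => {
      if (mode === "normal") mode = "degraded"; // never un-halt on a late warning
    },
    onInsufficientFunds: (): void => {
      mode = "halted";
    },
    mode: (): Mode => mode,
    // Agents ask before each step: optional work stops first, everything stops when halted.
    allow: (task: "required" | "optional"): boolean =>
      mode === "normal" || (mode === "degraded" && task === "required"),
  };
}

const guard = makeBudgetGuard();
guard.onLowBalance();
console.log(guard.allow("optional")); // false
console.log(guard.allow("required")); // true
```

The two handlers can then be passed in the options object where you start the proxy, next to `onReady` and `onRouted`.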

Multi-agent patterns: orchestrating swarms without breaking the bank

Agentic frameworks (e.g. OpenClaw, CrewAI, AutoGPT) often spawn multiple sub‑agents to solve complex tasks.

Without routing, each sub‑agent defaults to the most expensive model, quickly burning your budget.

ClawRouter’s design makes it ideal for multi‑agent workflows:

- Decentralized budgeting: each agent can use the same wallet or its own wallet, paying per request via x402. There’s no need to share API keys or centralize credits.

- Tier‑based routing: sub‑agents performing simple tasks (search, summarization, code formatting) get routed to cheap models, while specialized agents (reasoning, research) are escalated to higher tiers.

- Parallel concurrency: models like Kimi K2.5 (Moonshot AI) are optimized for agent swarms, supporting up to 100 parallel agents and stable tool chains over hundreds of calls. By combining Kimi with ClawRouter’s scoring, you can orchestrate large swarms cheaply and reliably.

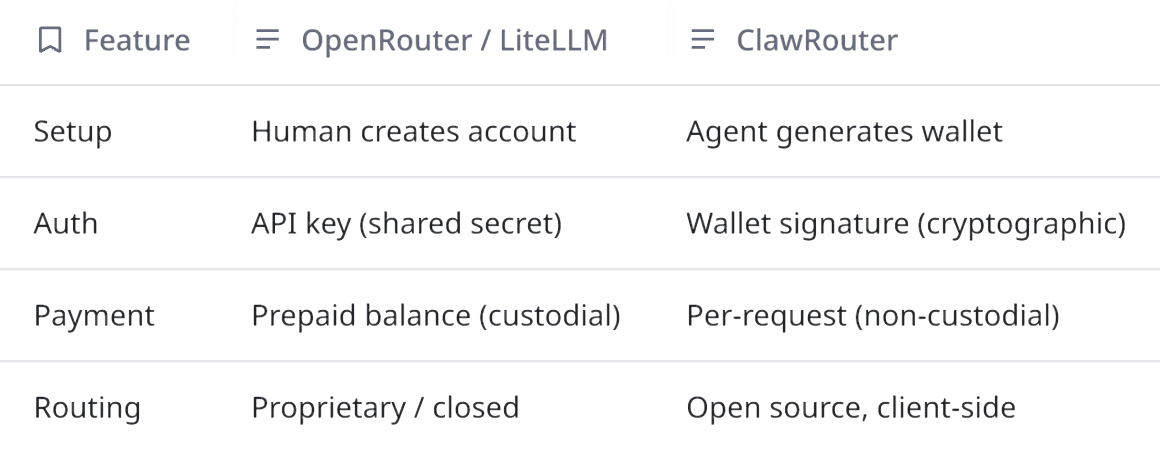

Why Not OpenRouter / LiteLLM?

They’re built for developers. ClawRouter is built for agents.

Agents shouldn’t need a human to paste API keys. They should generate a wallet, receive funds, and pay per request programmatically.

Example: multi‑agent research assistant

Suppose you’re building a research assistant that needs to gather background, summarize papers and derive insights.

You can create sub‑agents:

- Web scraper: fetches pages, extracts text. Prompts are simple; routed to `deepseek-chat` at $0.27/M.

- Summarizer: condenses long articles; routed to `gpt-4o-mini`.

- Synthesizer: draws connections and formulates insights; may trigger `claude-sonnet-4`.

- Fact checker: uses search and chain‑of‑thought; may require `o3` or Opus.

Agents interact via messages passed through a coordinator.

Each call to blockrun/auto returns the chosen model, cost estimate and savings, which you can log.

Because routing is local, it adds no noticeable latency, which is crucial for swarms of dozens of agents.

Below is a pseudocode snippet using a hypothetical multi‑agent framework:

```python
# my_agents is a hypothetical framework used only for illustration
from my_agents import WebScraper, Summarizer, Synthesizer, FactChecker
from openai import OpenAI

# Configure OpenAI client to point at local ClawRouter proxy
oi = OpenAI(base_url="http://127.0.0.1:8402/v1", api_key="")

# Each agent uses the auto model
scraper = WebScraper(client=oi, model="blockrun/auto")
summarizer = Summarizer(client=oi, model="blockrun/auto")
synthesizer = Synthesizer(client=oi, model="blockrun/auto")
fact_checker = FactChecker(client=oi, model="blockrun/auto")

# Orchestrate tasks
articles = scraper.scrape("topic: agentic AI")
summaries = [summarizer.summarize(a) for a in articles]
draft = synthesizer.synthesize(summaries)
verified = fact_checker.verify(draft)
print(verified)
```

Each call will automatically choose the cheapest model appropriate for the prompt.

You can introspect routing decisions via the callback onRouted to see savings and adjust your agent’s strategy if certain calls consistently trigger expensive models.

Patterns and insights

- Chunk your prompts: smaller prompts often stay in the SIMPLE tier, so break large documents into chunks before summarizing. This helps routing stay cheap.

- Explicit constraints: if your agent cares about output format (JSON/YAML), include it in the prompt. The scoring logic detects output format keywords and may route to models better at structured output (e.g. GPT‑4o vs DeepSeek).

- Override when necessary: for deterministic evaluation or benchmarking, override the selected model by specifying `openai/gpt-4o` or another id in the request. You still benefit from x402 and logging.

- Monitor balances: use `onLowBalance` and `onInsufficientFunds` to gracefully degrade your agent’s capabilities when funds are low: skip optional tasks or route everything to a lower tier.

- Use fallback chains: cascade routing is planned but not available yet. For now, you can manually implement fallback: call `blockrun/auto`, check if the response quality is sufficient, and if not, re‑issue the prompt with `claude-sonnet-4`.
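The manual fallback from the last bullet can be sketched as a loop over a chain of models. Everything here is illustrative: `Complete` stands in for whatever client call you already use, `goodEnough` is your own quality check, and the default chain is an assumption based on the model ids mentioned earlier in this post.

```typescript
// Hypothetical stand-ins for your own client call and quality check.
type Complete = (model: string, prompt: string) => Promise<string>;
type GoodEnough = (answer: string) => boolean;

async function completeWithFallback(
  complete: Complete,
  prompt: string,
  goodEnough: GoodEnough,
  chain: string[] = ["blockrun/auto", "blockrun/anthropic/claude-sonnet-4"]
): Promise<{ model: string; answer: string }> {
  if (chain.length === 0) throw new Error("Fallback chain is empty");
  let model = "";
  let answer = "";
  for (const candidate of chain) {
    model = candidate;
    answer = await complete(candidate, prompt);
    // Stop at the first answer that passes the quality check.
    if (goodEnough(answer)) break;
  }
  // If nothing passed, the last (strongest) attempt is returned as-is.
  return { model, answer };
}
```

Keep the quality check cheap, for example a JSON parse or a length test. If it needs another LLM call, the fallback can end up costing more than it saves.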

Beyond the router: ecosystem and roadmap

ClawRouter is part of BlockRun’s pay‑per‑request AI infrastructure.

Together with OpenClaw, it aims to make agents autonomous in both reasoning and billing.

The roadmap includes cascade routing, spend controls and an analytics dashboard.

For developers building agentic applications, this translates to:

- Fine‑grained cost controls and budgets per agent or project.

- Unified access to 30+ models across OpenAI, Anthropic, Google, DeepSeek, xAI, and Moonshot.

- Transparent, open‑source routing logic that you can extend or embed in your own tools.

Thoughts

The router’s local‑first philosophy means privacy, speed and flexibility are in your hands.

If you build agents or any application that invokes LLMs, give ClawRouter a try.

You might be surprised at how much efficiency you can unlock when the right tool is picked for every job.