How to Deploy AI Agents

1. VPS Setup

Hetzner Cloud (the process is similar on other providers)

Start here first

Before touching OpenClaw, you need a server. This section walks through provisioning a VPS on Hetzner Cloud (one of the best price-to-performance options in Europe) from account login to your first SSH session.

Why a VPS instead of running locally?

Running AI agents on your laptop works for quick experiments, but falls apart in production. Your laptop sleeps, loses Wi-Fi, and shares resources with everything else you're doing. A VPS gives you a dedicated machine that's always on, always reachable, and isolated from your daily workflow. It's also the only sane way to expose messaging integrations (Telegram, WhatsApp) that need a persistent connection.

More importantly, a VPS behind Tailscale (which we'll set up in the next section) means your agent infrastructure is invisible on the public internet: no open ports for bots to scan and no brute-force attempts filling your logs.

Why Hetzner?

Hetzner's CX23 plan gives you 2 vCPUs, 4 GB RAM, 40 GB NVMe, and 20 TB of traffic for €3.49/month (about $4). That's roughly 3-5x cheaper than equivalent specs on AWS, GCP, or DigitalOcean. Billing is hourly with a monthly cap, so you can spin up a server, test for a few hours, and destroy it for pennies. Datacenters are in Germany and Finland (with US locations available), which keeps latency low for European users and respects EU data residency requirements by default.
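Because billing is hourly with a monthly cap, a short-lived test server costs the smaller of (hours used × hourly rate) and the monthly price. Here is a sketch of that arithmetic in shell. The hourly rate is a hypothetical placeholder, so check Hetzner's pricing page for the real figure:

```shell
# Estimate the cost of a server under hourly billing with a monthly cap.
# billed_cost MONTHLY_CAP HOURLY_RATE HOURS -> prints the cost in euros, 2 decimals
billed_cost() {
  awk -v cap="$1" -v rate="$2" -v hours="$3" 'BEGIN {
    cost = rate * hours          # pay-as-you-go amount
    if (cost > cap) cost = cap   # never more than the monthly price
    printf "%.2f\n", cost
  }'
}

MONTHLY_CAP=3.49    # CX23 monthly price quoted above
HOURLY_RATE=0.006   # hypothetical hourly rate, for illustration only

billed_cost "$MONTHLY_CAP" "$HOURLY_RATE" 5     # a 5-hour test run
billed_cost "$MONTHLY_CAP" "$HOURLY_RATE" 744   # a full 31-day month runs into the cap
```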

For OpenClaw with cloud-hosted models (Anthropic, OpenAI), the CX23 is more than sufficient. If you plan to run local models through Ollama, you'll want to step up to a CX33 (4 vCPUs, 8 GB RAM) or higher; more on that in the OpenClaw install section.

Step 1: Log in to Hetzner Cloud

Head to console.hetzner.cloud and sign in. If you don't have an account, registration takes about two minutes. Hetzner may ask for ID verification for new accounts. This is normal and usually completes within a few hours.

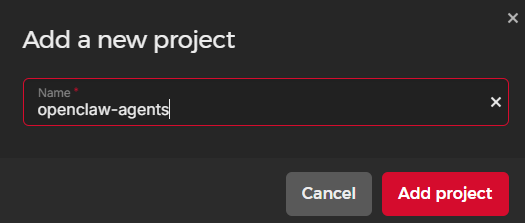

Step 2: Create a new project

Projects in Hetzner are organizational containers; think of them like folders. Create one called something descriptive (e.g., "ai-agents" or "openclaw-prod"). This keeps your agent infrastructure separate from any other servers you might spin up later.

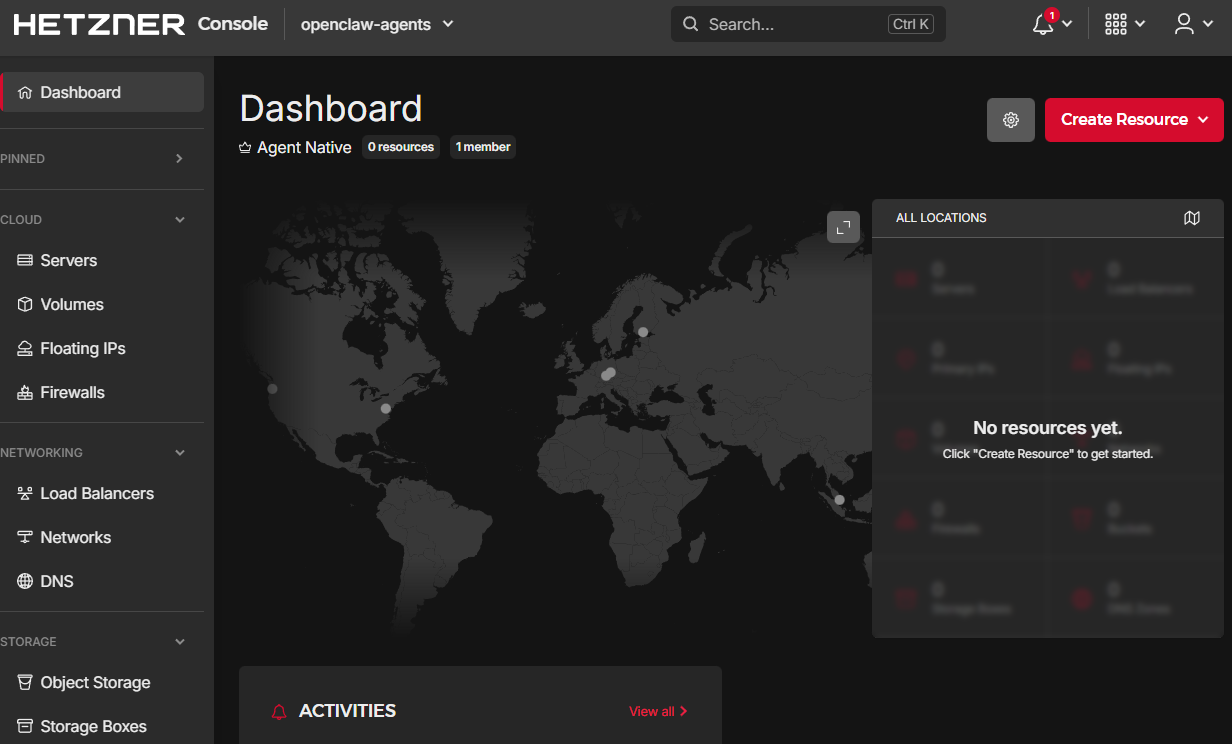

Here's your project dashboard where you'll manage all resources:

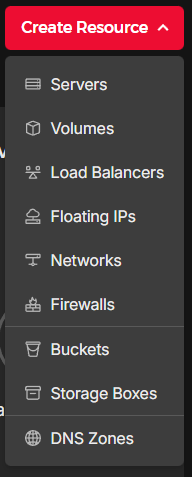

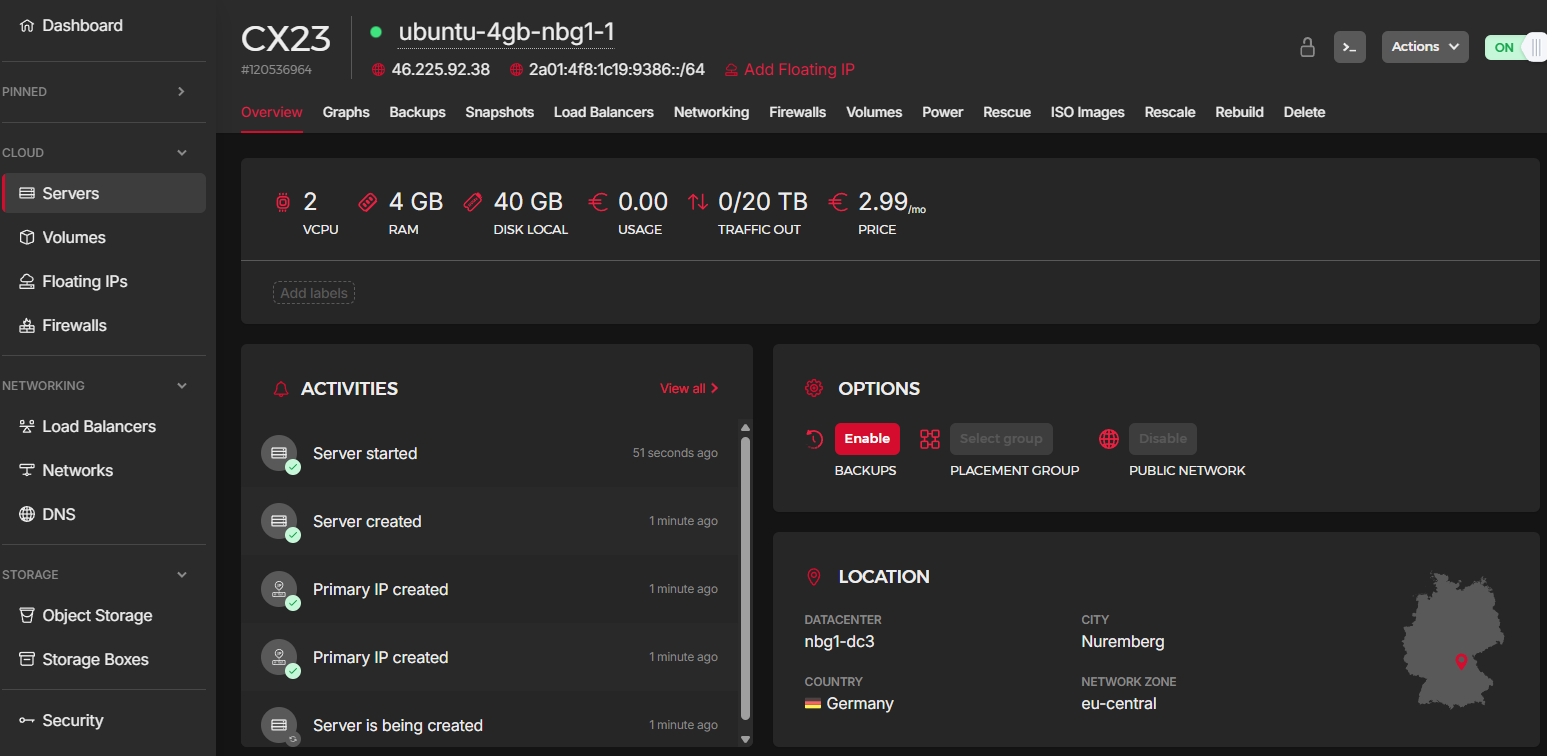

Step 3: Create a new server

Click Create New Resource → Server. This is where you configure the machine specs. Here's what to select:

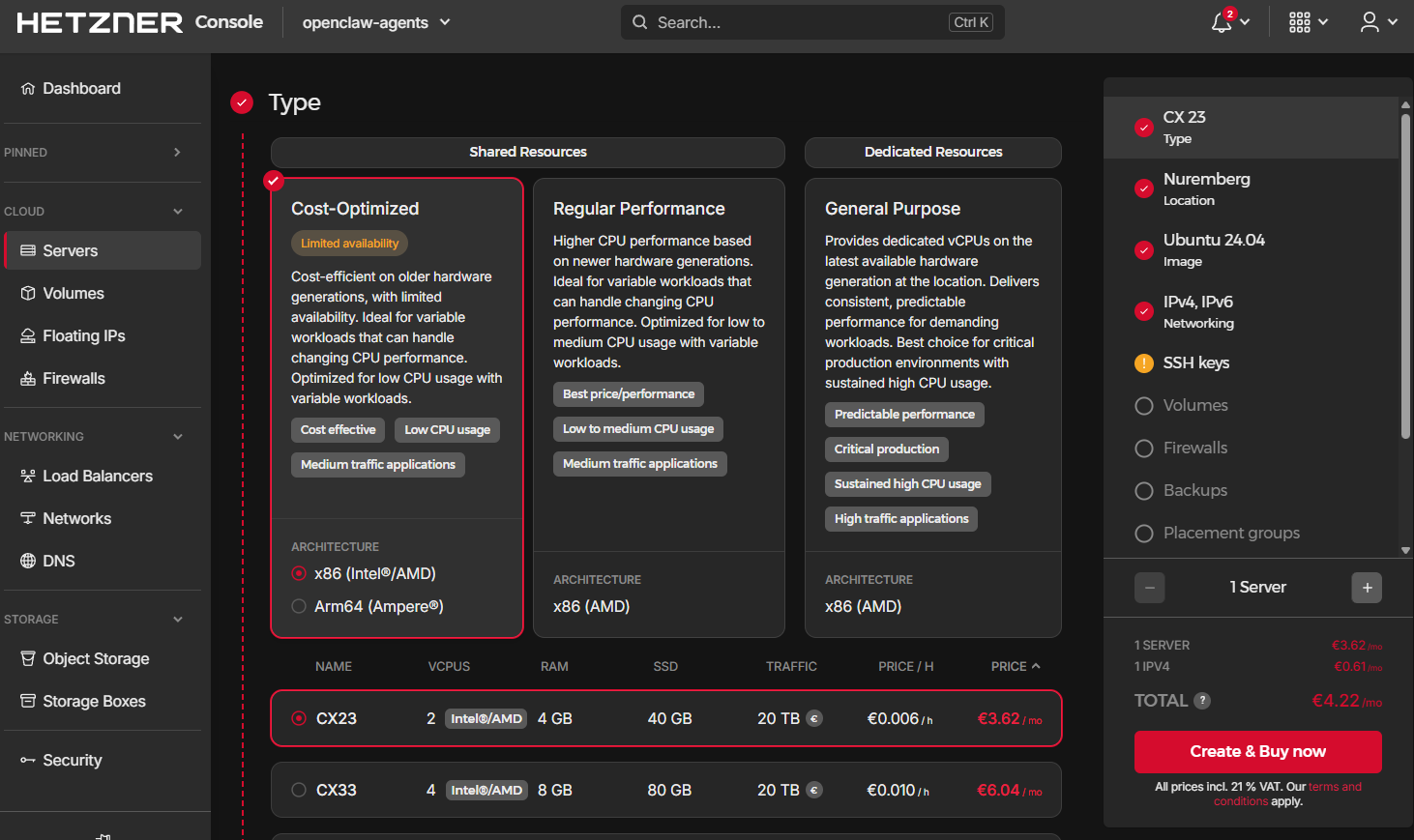

Step 4: Choose server type

Select Shared vCPU under the server type. This is the cost-optimized tier, where CPU cores are shared across tenants. That's perfectly fine for an agent gateway that spends most of its time waiting on API responses rather than crunching data locally.

Pick CX23 as the plan (2 vCPUs, 4 GB RAM, 40 GB NVMe). For reference, here's how the cost-optimized line scales if you need more later:

- CX23: 2 vCPUs, 4 GB RAM, 40 GB NVMe for €3.49/month

- CX33: 4 vCPUs, 8 GB RAM, 80 GB NVMe for €5.49/month

- CX43: 8 vCPUs, 16 GB RAM, 160 GB NVMe for €12.49/month

- CX53: 16 vCPUs, 32 GB RAM, 320 GB NVMe for €24.49/month

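You can resize between these tiers later without losing data; Hetzner handles the migration. The server has to be powered off while it rescales, and if you also grow the disk you can't move back down to a smaller plan afterwards. Start small and scale when you need to.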

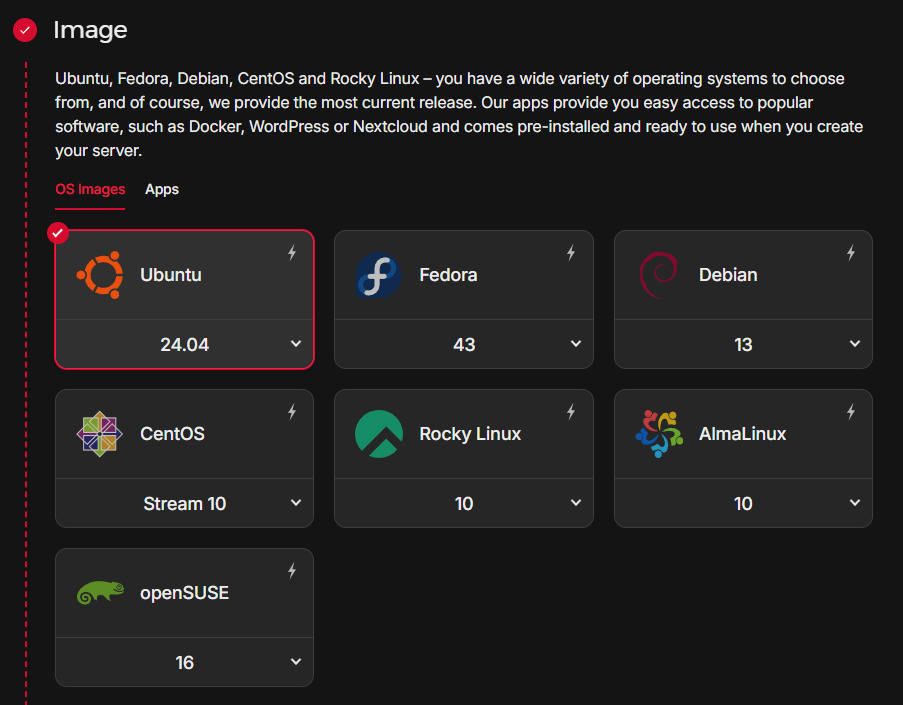

Step 5: Select the OS image

Choose Ubuntu 24.04 LTS. OpenClaw officially supports Ubuntu 22.04 and 24.04. The 24.04 release gives you a newer kernel, updated packages, and security patches through April 2029.

Some operators prefer Debian for its conservative update policy and lighter footprint. Both work; Ubuntu just has broader community support for troubleshooting, and it's what most OpenClaw guides assume.

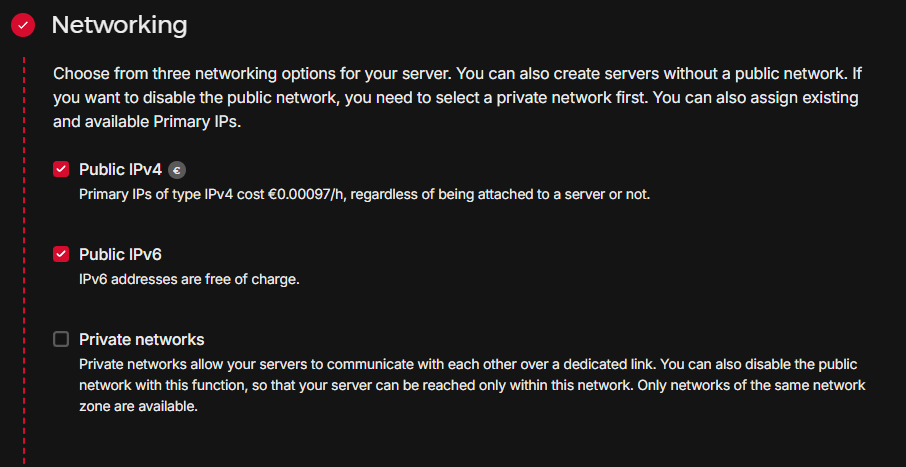

Step 6: Configure networking

Enable both IPv4 and IPv6. The IPv4 address is what you'll use for initial SSH access (before Tailscale takes over). IPv6 is free and future-proofs your setup, since some services and APIs are starting to prefer it.

Don't worry about the public IPv4 being exposed right now. In the next section, we'll lock it down so only Tailscale traffic gets through.

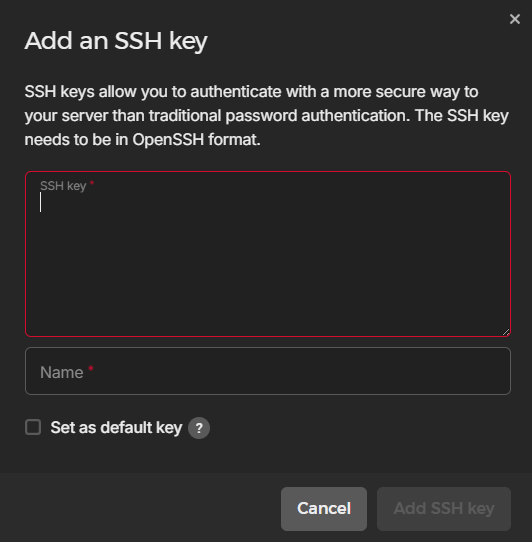

Step 7: Add your SSH key

Paste your public SSH key in the dialog. If you don't have one yet, generate a key pair on your local machine:

# Generate an Ed25519 key (modern, fast, secure)

ssh-keygen -t ed25519 -C "your@email.com"

# Print the public key so you can copy it

cat ~/.ssh/id_ed25519.pub

Ed25519 keys are shorter and faster than RSA, and they're the recommended default in 2026. Paste the contents of the .pub file into the Hetzner SSH key dialog. This key is only needed for the initial connection; once Tailscale SSH is configured, it takes over authentication entirely.

Step 8: Skip volumes and firewalls

Leave Volumes and Firewalls empty for now. The 40 GB NVMe included with CX23 is plenty for OpenClaw (which needs around 1-2 GB for the installation plus workspace). We'll handle firewall rules at the OS level with ufw and Tailscale, which gives us more granular control than Hetzner's cloud firewall.

Step 9: Name it and create

Give your server a meaningful name (e.g., openclaw-prod or agent-gateway-01) and click Create & Buy. Provisioning takes 10-30 seconds.

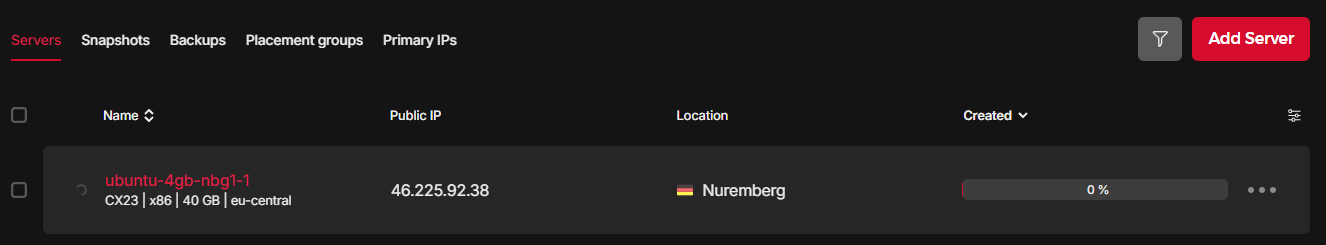

Step 10: Copy the public IP

Once the server status turns green, copy the public IPv4 address. You'll need it for the initial SSH connection. After Tailscale is configured, you'll use the Tailscale IP (a 100.x.x.x address) instead.

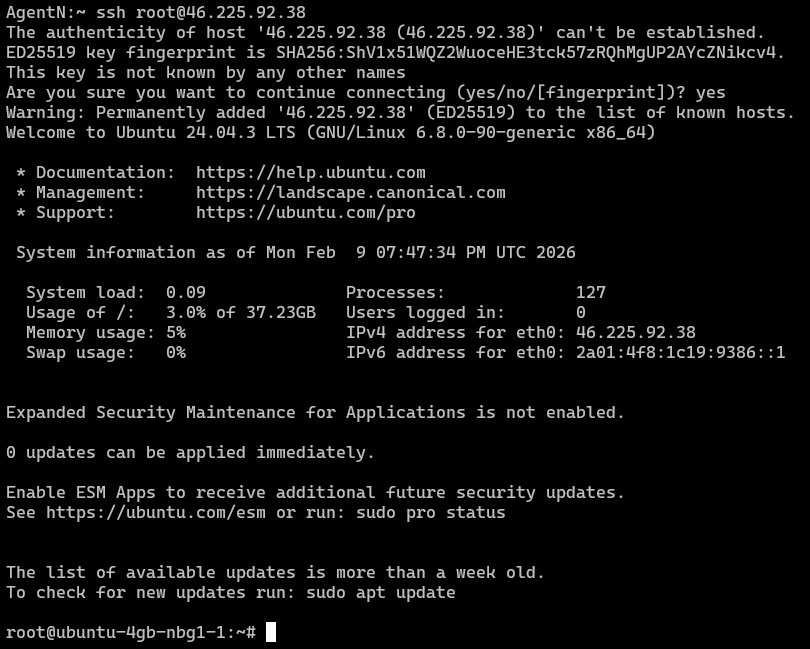

Step 11: SSH for the first time

Open a terminal on your local machine and connect:

ssh root@<PUBLIC_IP>

# Type "yes" when prompted to accept the host fingerprint

If the connection succeeds, you're in. This is the last time you'll SSH over the public internet: by the end of the next section, public SSH access will be completely disabled.

If it fails, double-check that the SSH key you pasted matches the private key on your local machine (~/.ssh/id_ed25519), and that your local IP isn't being blocked by any corporate firewall or VPN.
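To rule out a mismatched key, you can derive the public key from your private key and compare it with the .pub file (and with what you pasted into Hetzner). A minimal POSIX shell sketch; the function name is our own, and ssh-keygen -y will prompt for the passphrase if your key has one:

```shell
# Check whether a private key and a public key file belong to the same key pair.
# ssh-keygen -y derives the public key from the private key; we compare the key
# type and key data (fields 1-2) and ignore the trailing comment.
key_pair_matches() {
  local private_key="$1" public_key="$2" derived stored
  derived=$(ssh-keygen -y -f "$private_key") || return 1
  derived=$(printf '%s\n' "$derived" | awk '{ print $1, $2 }')
  stored=$(awk 'NR == 1 { print $1, $2 }' "$public_key")
  [ -n "$stored" ] && [ "$derived" = "$stored" ]
}

# Usage on your local machine:
#   key_pair_matches ~/.ssh/id_ed25519 ~/.ssh/id_ed25519.pub && echo "keys match"
```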

What we've accomplished

You now have a dedicated Ubuntu server running in Hetzner's datacenter for €3.49/month. It has a public IP, root SSH access, and 40 GB of fast NVMe storage — everything OpenClaw needs. But right now, it's completely exposed on the public internet. Every port is reachable, SSH is listening on port 22, and automated bots will start probing it within hours. Let's fix that.

2. Initial Security Hardening

Lock down before expanding capabilities

A freshly provisioned VPS is an open target. Bots scan the entire IPv4 address space in under 45 minutes, so your server will get its first brute-force SSH attempt within hours of going live. In this section, we'll close every door that doesn't need to be open: install Tailscale to create a private encrypted network, restrict SSH to that network only, disable root login, and create a non-root user for daily operations.

What we're building

By the end of this section, your VPS will have a completely different security posture:

- Before: SSH on port 22 accepting connections from any IP on earth. Root login enabled. Password authentication possible.

- After: SSH only listens on the Tailscale network interface. Root login disabled. Password auth disabled. Only authorized devices on your Tailscale tailnet can reach the server at all.

This is the "zero trust" approach. The server doesn't trust any network. Access is granted per-device, per-user, through an encrypted mesh, not by IP address or network location.

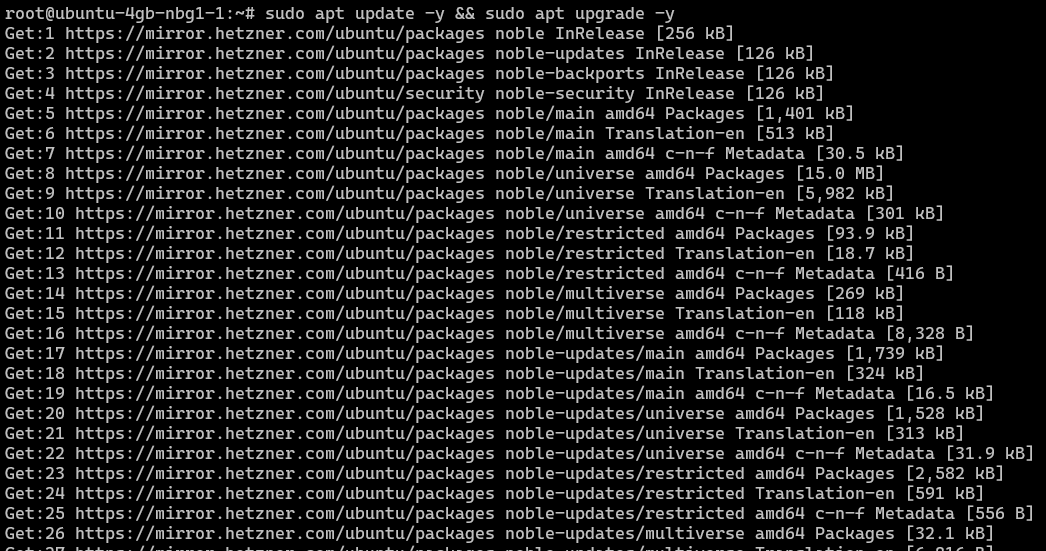

Step 1: Update the system

First things first: pull the latest package lists and upgrade everything. On a fresh Ubuntu install, this patches known CVEs in base packages and ensures your package manager is working correctly.

sudo apt update -y && sudo apt upgrade -y

This may take a minute or two depending on how many packages need updating. If prompted about configuration file changes, accept the default (keep the currently installed version).

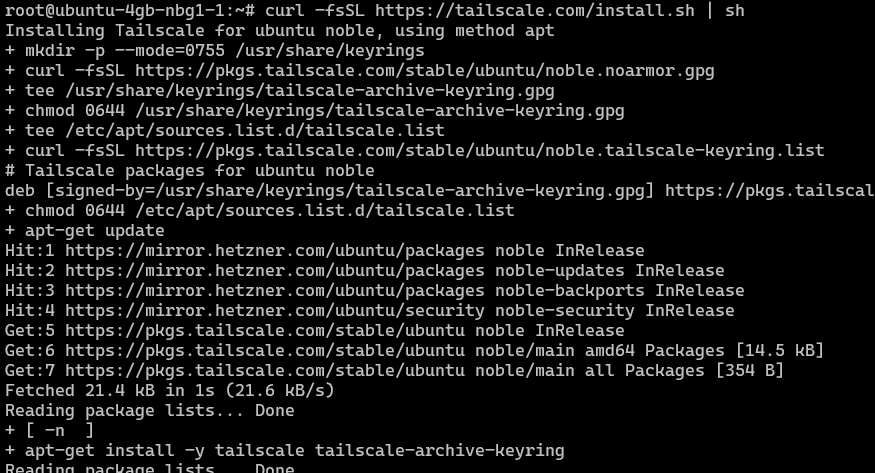

Step 2: Install Tailscale

What is Tailscale and why do we need it?

Tailscale creates a private mesh network (called a "tailnet") between your devices using WireGuard encryption under the hood. Every device on the tailnet gets a stable 100.x.x.x IP address that works regardless of physical network, NAT, or firewall. Traffic between devices is end-to-end encrypted and, whenever a direct path exists, peer-to-peer: it only touches Tailscale's servers for initial coordination, or passes through Tailscale's DERP relays (still encrypted end to end) when a direct connection can't be established.
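In practice, Tailscale hands out these addresses from 100.64.0.0/10 (the RFC 6598 shared address space), so a tailnet IPv4 address falls between 100.64.0.0 and 100.127.255.255. A small POSIX shell check along those lines can be handy in setup scripts; the function name is our own:

```shell
# Succeed if $1 is a dotted IPv4 address inside 100.64.0.0/10, the range
# Tailscale assigns tailnet addresses from (100.64.0.0 through 100.127.255.255).
is_tailnet_ipv4() {
  printf '%s\n' "$1" | awk -F. '
    NF != 4 { exit 1 }                       # need exactly four octets
    {
      for (i = 1; i <= 4; i++)
        if ($i !~ /^[0-9]+$/ || $i + 0 > 255) exit 1
      # a /10 fixes the first octet at 100 and the top two bits of the second (64-127)
      exit !($1 == 100 && $2 >= 64 && $2 <= 127)
    }
  '
}

is_tailnet_ipv4 100.105.11.108 && echo "100.105.11.108 is a tailnet address"
is_tailnet_ipv4 100.20.0.1 || echo "100.20.0.1 is not"
```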

Think of it as a VPN, but without the VPN server bottleneck. Your laptop talks directly to your VPS through an encrypted tunnel, and that tunnel works from your home Wi-Fi, a coffee shop, a hotel, or a corporate network, with no port forwarding or firewall exceptions required.

For our setup, Tailscale replaces traditional SSH key management entirely. With --ssh enabled, Tailscale handles authentication and authorization for SSH connections. You don't need to manage authorized_keys files, rotate SSH keys, or worry about compromised keys; access is controlled through Tailscale's admin console.

Install the Tailscale client on your VPS:

curl -fsSL https://tailscale.com/install.sh | sh

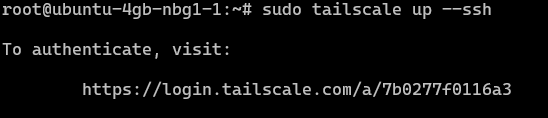

Step 3: Connect the VPS to your tailnet

Start Tailscale with SSH mode enabled. The --ssh flag tells Tailscale to accept SSH connections directly, using your Tailscale identity instead of SSH keys:

sudo tailscale up --ssh

Tailscale will print a URL. Open it in your browser to authenticate. This links the VPS to your Tailscale account, which means only devices you've authorized on the same tailnet can connect to it.

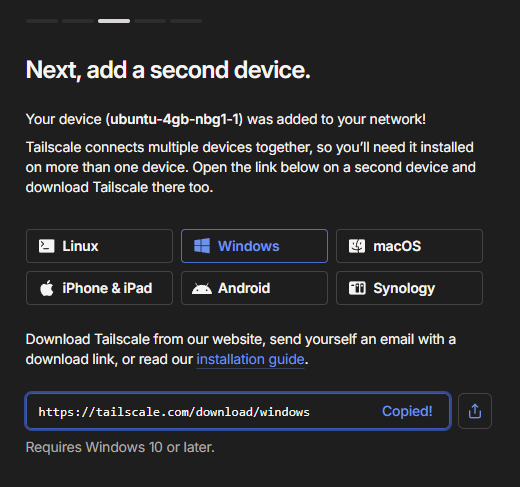

Step 4: Install Tailscale on your local machine

For the private network to work, both ends need Tailscale. Download and install Tailscale on the machine you'll manage the VPS from, e.g. your laptop, desktop, or workstation. Tailscale supports macOS, Windows, Linux, iOS, and Android.

Sign in to the same Tailscale account you used for the VPS:

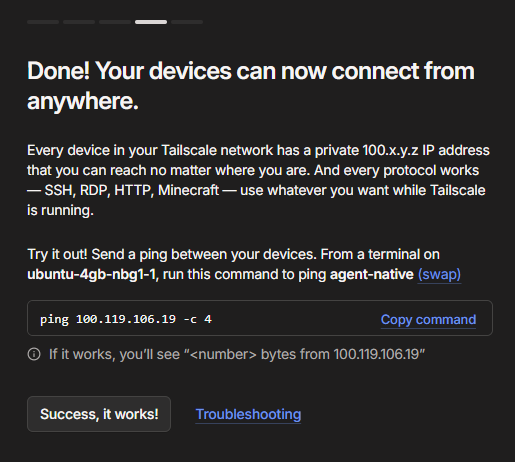

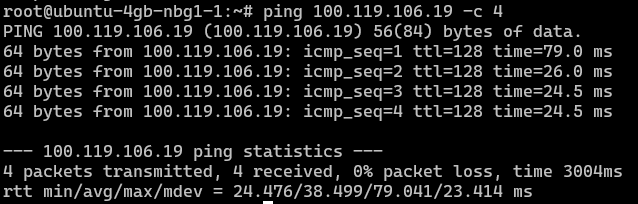

Step 5: Verify the mesh connection

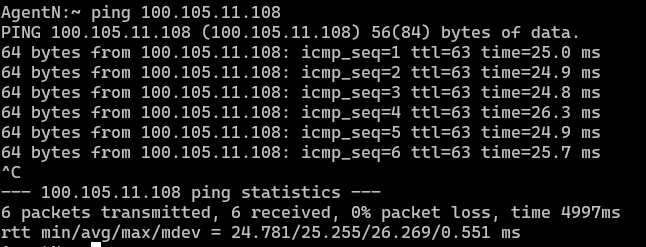

Once both devices are connected, you can ping one from the other using their Tailscale IPs. This confirms the encrypted tunnel is working.

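Ping your local device from the VPS (running tailscale ip -4 on a device prints its Tailscale IPv4 address):

ping -c 4 <LOCAL_TAILSCALE_IP>

Now ping the VPS from your local machine (on Windows, use ping -n 4 instead of ping -c 4):

ping -c 4 <VPS_TAILSCALE_IP>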

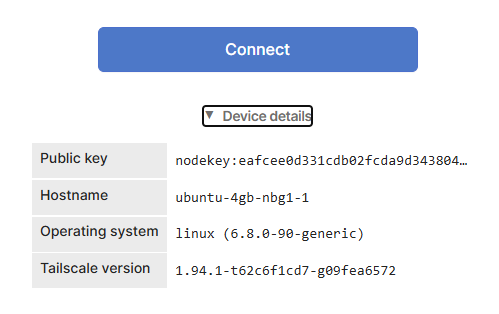

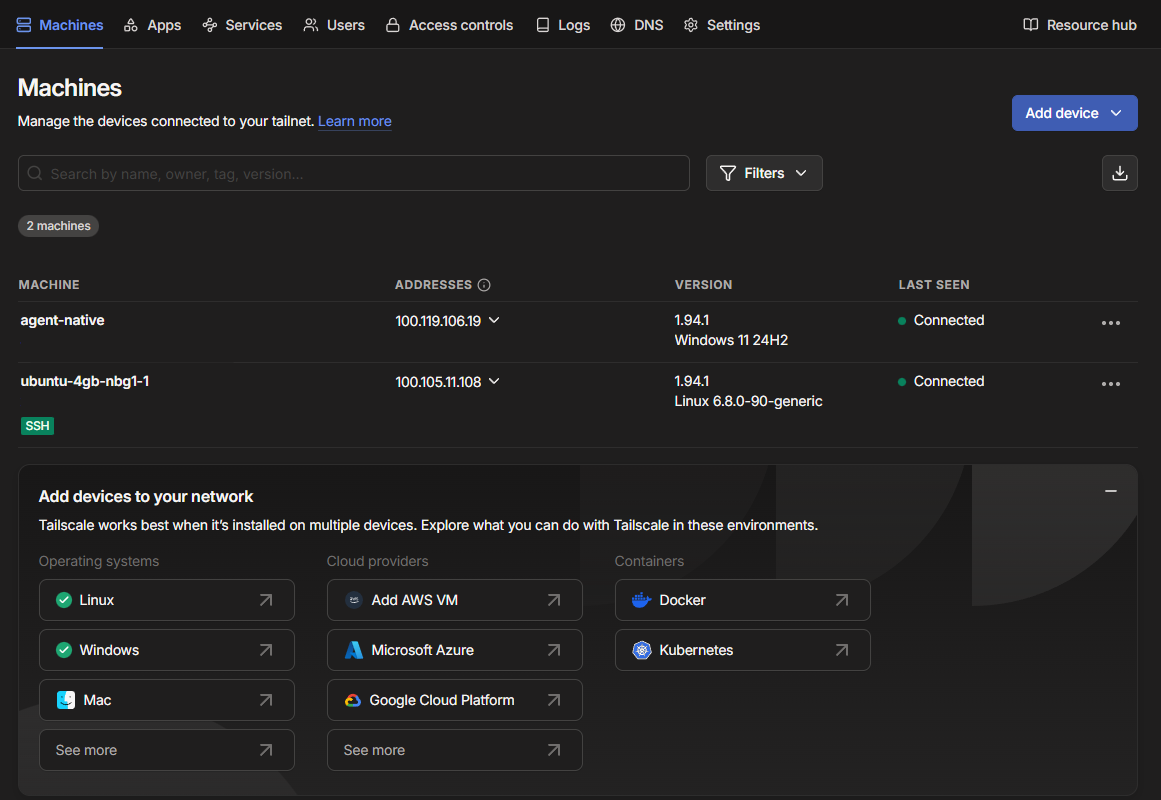

You should see replies with low latency and no packet loss. If pings fail, check that both devices show as "Connected" in the Tailscale admin console.

The admin console at login.tailscale.com/admin/machines shows all devices on your tailnet, their Tailscale IPs, connection status, and last-seen timestamps. Bookmark it; you'll use it whenever you add a new device or debug connectivity issues.
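If you want to script the ping check (for example at the end of a setup script), you can parse ping's summary line. A sketch in POSIX shell with awk; the function name is our own, and it only understands the Linux and macOS summary formats:

```shell
# Read ping output on stdin; succeed only if its summary line reports 0% packet loss.
# Matches the Linux ("0% packet loss") and macOS ("0.0% packet loss") summaries;
# Windows ping prints a different format and is not handled here.
no_packet_loss() {
  awk '
    match($0, /[0-9.]+% packet loss/) {
      found = 1
      loss = substr($0, RSTART, RLENGTH) + 0   # numeric prefix, e.g. 25 from "25% packet loss"
    }
    END { exit !(found && loss == 0) }
  '
}

# Usage, with a placeholder for the other device's Tailscale IP:
#   ping -c 4 <TAILSCALE_IP> | no_packet_loss && echo "tunnel OK"
```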

Step 6: Harden SSH configuration

Now the critical part: we tell the SSH daemon to only listen on the Tailscale network interface. This makes SSH completely invisible on the public internet: even a port scan of your public IP won't find it.

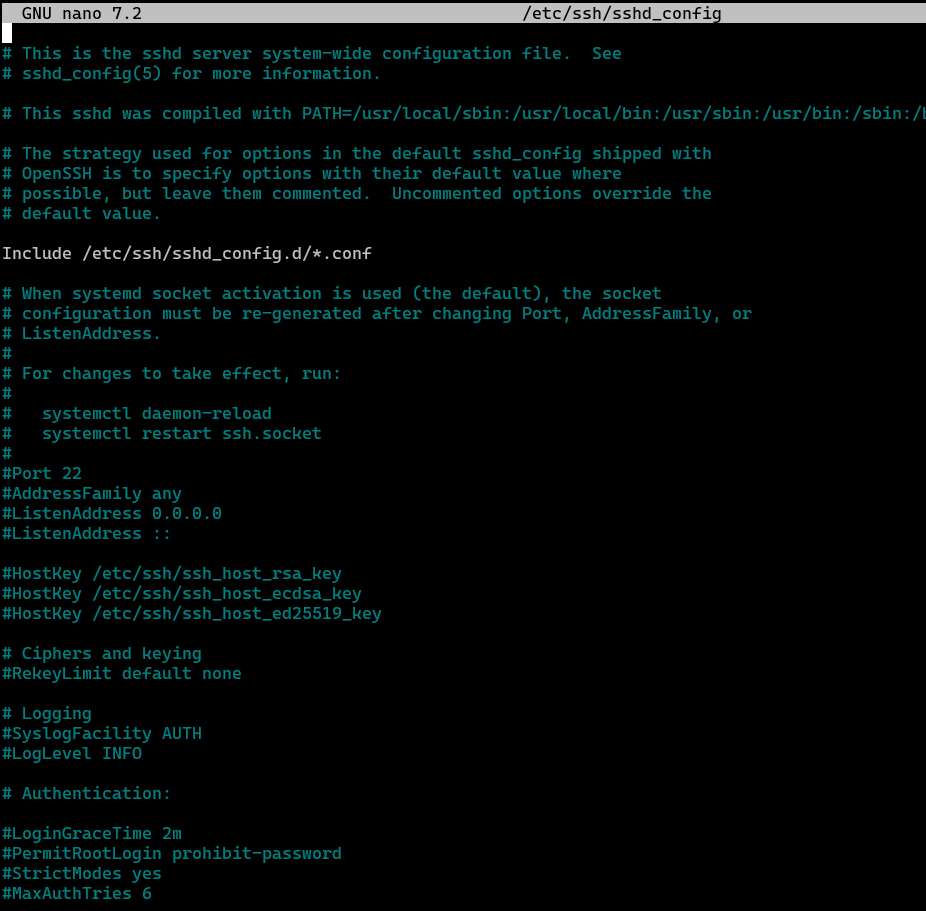

Open the SSH configuration file in your VPS terminal:

nano /etc/ssh/sshd_config

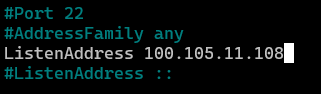

Find the ListenAddress line (it's usually commented out). Uncomment it and set it to your VPS's Tailscale IP, which you can find in the Tailscale admin console or by running tailscale ip -4 on the VPS. It'll look something like 100.105.11.108.

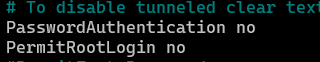

Then set these two additional options to disable password authentication and root login:

# Only listen on the Tailscale interface, not the public IP

# Replace 100.x.x.x with YOUR VPS Tailscale IP (keep comments on their own lines)

ListenAddress 100.x.x.x

# Disable password authentication — keys or Tailscale SSH only

PasswordAuthentication no

# Disable root login — use your non-root user (created next)

PermitRootLogin no

Why each setting matters

- ListenAddress: Binds SSH to the Tailscale interface only. Anyone scanning your public IP will see port 22 as closed. This is the single most effective hardening step because it removes SSH from the public attack surface entirely.

- PasswordAuthentication no: Even if an attacker somehow reaches SSH, they can't brute-force passwords. Only key-based or Tailscale SSH authentication works.

- PermitRootLogin no: Forces the use of a regular user account, so even a compromised session doesn't have root privileges by default. You'll use sudo when you need elevated access.

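Save with Ctrl + S and exit with Ctrl + X. Before restarting anything, run sshd -t to check for syntax errors (no output means the file parsed cleanly), then sshd -T | grep -Ei 'listenaddress|passwordauthentication|permitrootlogin' to confirm the effective values. A file under /etc/ssh/sshd_config.d/ can override your edits, because Ubuntu includes that directory at the top of sshd_config and sshd keeps the first value it reads for most keywords.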
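As an alternative to editing the main file in nano, the same three settings can go into a drop-in whose name sorts first in /etc/ssh/sshd_config.d/, so sshd reads it before other drop-ins. A sketch; the function and the file name 00-tailscale-hardening.conf are our own choices:

```shell
# Hypothetical helper: write the three hardening directives to a drop-in file in DIR.
# On Ubuntu, /etc/ssh/sshd_config includes sshd_config.d/*.conf near the top and sshd
# keeps the first value it reads, so a name that sorts first (00-...) takes precedence.
write_ssh_hardening() {
  local dir="$1" tailscale_ip="$2"
  local target="$dir/00-tailscale-hardening.conf"
  # Loose sanity check (digits and dots only), not full IPv4 validation
  case "$tailscale_ip" in
    ""|*[!0-9.]*) echo "refusing suspicious address: $tailscale_ip" >&2; return 1 ;;
  esac
  mkdir -p "$dir" || return 1
  cat > "$target" <<EOF
# Only listen on the Tailscale interface, not the public IP
ListenAddress $tailscale_ip
# Keys or Tailscale SSH only
PasswordAuthentication no
# Use the non-root user instead
PermitRootLogin no
EOF
  echo "$target"
}

# On the VPS, as root, then validate before restarting SSH:
#   write_ssh_hardening /etc/ssh/sshd_config.d "$(tailscale ip -4)"
#   sshd -t && sshd -T | grep -Ei '^(listenaddress|passwordauthentication|permitrootlogin) '
```

Use either this drop-in or the manual edit, not both: ListenAddress accumulates across files, and listing the same address twice may make sshd log a bind error.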

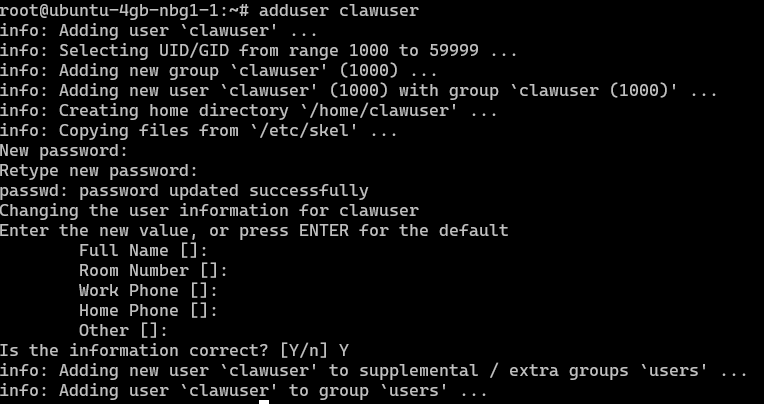

Step 7: Create a non-root user

Running everything as root is a security anti-pattern. If an agent or tool gets compromised, root access means the attacker owns the entire machine. A dedicated non-root user limits the blast radius and gives you an audit trail of privileged actions through sudo.

# Create a new user — pick any name (we use "clawuser")

adduser clawuser

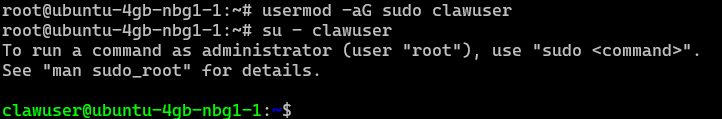

# Grant sudo privileges

usermod -aG sudo clawuser

# Switch to the new user to test

su - clawuser

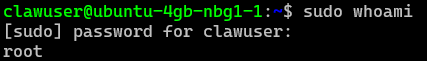

# Verify sudo works

sudo whoami

# Should output: root

When adduser prompts you, enter a password and accept the default values for the user info fields. Keep that password: SSH logins will go through Tailscale SSH, but sudo will still ask for it.

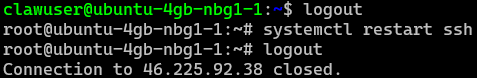

Step 8: Restart SSH and verify the new access flow

This is the moment of truth. We'll restart the SSH daemon with the new configuration, then verify that:

- Root SSH over the public IP fails (expected: root login is disabled and SSH no longer listens on the public IP)

- SSH as clawuser over the Tailscale IP succeeds

# Exit back to root, restart SSH, then disconnect

logout # back to root

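systemctl restart ssh

# Ubuntu 24.04 socket-activates SSH. If ss -tln still shows port 22 on 0.0.0.0 or [::],

# apply the new ListenAddress with: systemctl daemon-reload && systemctl restart ssh.socket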

logout # disconnect from VPS

Now from your local machine (with Tailscale running), try connecting as root, replacing 100.105.11.108 with your VPS's Tailscale IP. This should fail:

# This should FAIL: root login is disabled

ssh root@100.105.11.108

Then try connecting as your new user. This should succeed:

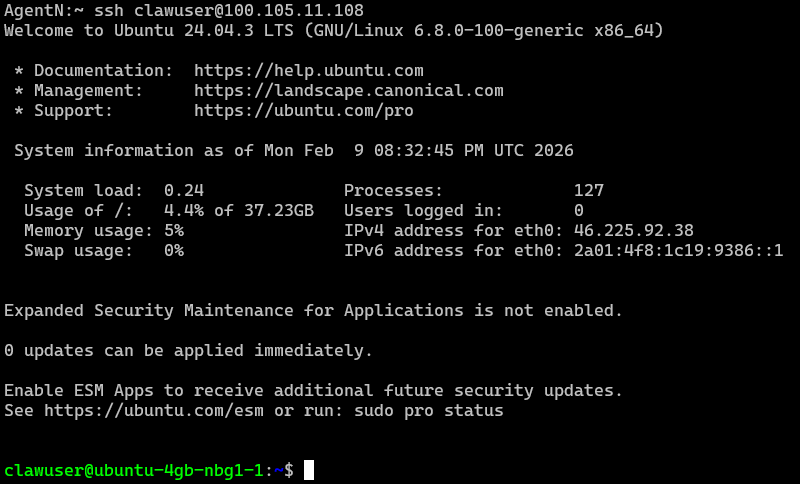

# This should SUCCEED — non-root user over Tailscale

ssh clawuser@100.105.11.108
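Once you're logged in as clawuser over Tailscale, you can confirm from the server side that nothing listens on port 22 outside the tailnet range. A sketch that parses ss output (ss is part of iproute2, installed on Ubuntu by default); the function name is hypothetical, and it only recognizes IPv4 tailnet addresses, so an IPv6 listener would also be reported:

```shell
# Read `ss -tlnH` output on stdin. Fail (and print the offending socket) if anything
# listens on TCP port 22 outside 100.64.0.0/10; also fail if nothing listens on 22.
ssh_only_on_tailnet() {
  awk '
    $4 ~ /:22$/ {                      # column 4 is "local-address:port"
      seen = 1
      addr = $4
      sub(/:22$/, "", addr)
      split(addr, o, ".")
      if (!(o[1] == 100 && o[2] >= 64 && o[2] <= 127)) {
        print "exposed: " $4
        bad = 1
      }
    }
    END { exit (bad || !seen) }
  '
}

# On the VPS:
#   ss -tlnH | ssh_only_on_tailnet && echo "SSH is tailnet-only"
```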

If you need to access the server from another device (phone, a second laptop, a CI runner), install Tailscale on that device and connect it to the same tailnet. No SSH key copying is needed; Tailscale handles the authentication.

You may still be able to ping the public IP at this stage. That's fine, ping doesn't give an attacker much. We'll close this completely with ufw firewall rules after OpenClaw is installed, in the Firewall & Gateway section.

Security checkpoint

Your VPS is now significantly hardened. SSH only works over Tailscale, root login is disabled, and password authentication is off. The public IP still responds to pings, but there's no open port for an attacker to exploit. In the next section, we'll install OpenClaw on this locked-down server.

3. Install and Onboard OpenClaw

From zero to running agent gateway in five minutes

Manual onboarding with OpenAI auth

OpenClaw is a local-first AI automation framework that provides a CLI, a secure local gateway (port 18789), a web dashboard, and plugin/agent support. This section walks through installation, the onboarding wizard, and connecting your first model provider. The entire process takes about five minutes.

System requirements

Before installing, make sure your server meets the minimum requirements:

- OS: Ubuntu 22.04 or 24.04 (server or desktop edition)

- Node.js: Version 22 or newer (the installer handles this automatically)

- CPU: 4 vCPUs minimum

- RAM: 8 GB recommended for cloud models. 32 GB+ if you plan to run local models through Ollama

- Disk: 20 GB minimum. 100 GB+ if pulling local model weights

- Network: Internet connectivity for installation and API calls to model providers

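Our Hetzner CX23 (2 vCPUs, 4 GB RAM, 40 GB NVMe) sits below these figures on paper, but it's fine for running OpenClaw with cloud-hosted models because the gateway itself is lightweight. If you need local models, size up by RAM: in the cost-optimized line listed in the VPS section, only the CX53 (32 GB RAM) meets the 32 GB+ guideline above.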
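To map these RAM guidelines onto the Hetzner plans listed in the VPS section, here's a small lookup sketch that returns the smallest cost-optimized plan with enough memory. The specs and prices are copied from that list and may change; the function name is our own:

```shell
# Return the smallest plan from the VPS section's cost-optimized list with at
# least $1 GB of RAM. Specs and prices are copied from that list and may change.
pick_plan() {
  local need_gb="$1" name vcpu ram disk price
  while read -r name vcpu ram disk price; do
    if [ "$ram" -ge "$need_gb" ]; then
      echo "$name ($vcpu vCPUs, $ram GB RAM, $disk GB NVMe, €$price/month)"
      return 0
    fi
  done <<'EOF'
CX23 2 4 40 3.49
CX33 4 8 80 5.49
CX43 8 16 160 12.49
CX53 16 32 320 24.49
EOF
  echo "no plan in this list has $need_gb GB of RAM" >&2
  return 1
}

pick_plan 8    # cloud-hosted models: prints the CX33 line
pick_plan 32   # local models through Ollama: prints the CX53 line
```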

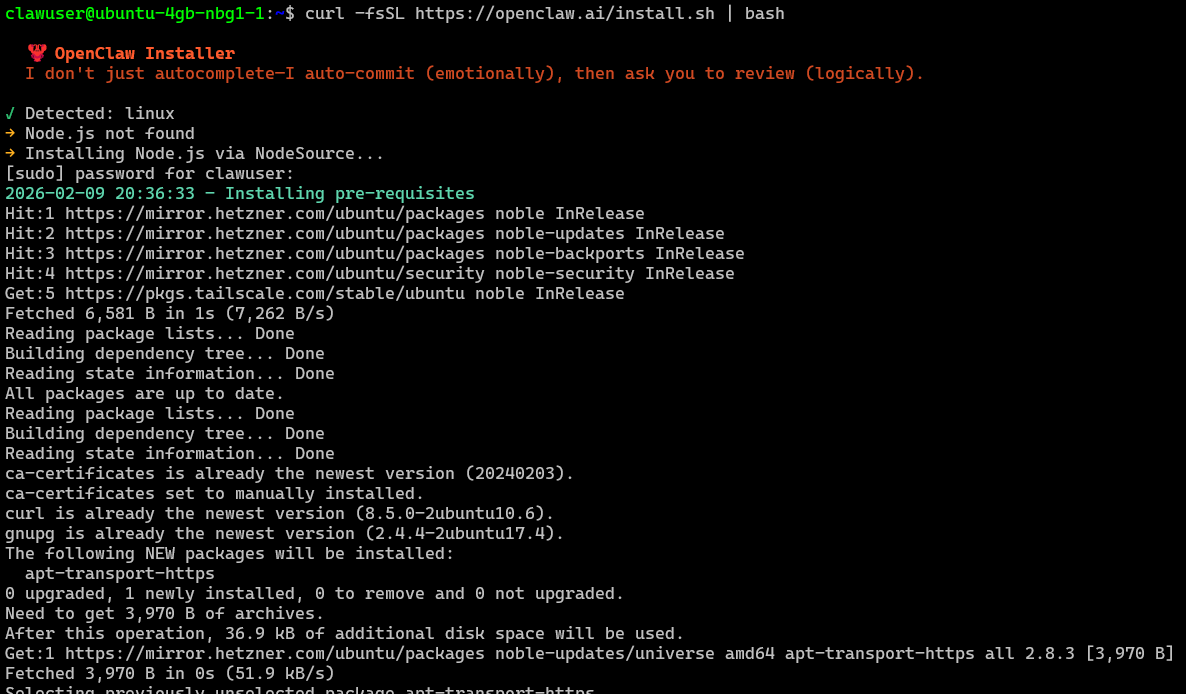

Step 1: Run the installer

OpenClaw provides multiple installation methods (npm, pnpm, Git, Docker, Podman, Nix, Ansible), but the one-liner installer script is the fastest path. It detects your Node.js version, installs it if missing, installs the OpenClaw CLI globally, and launches the onboarding wizard:

curl -fsSL https://openclaw.ai/install.sh | bash

The script handles everything: Node detection, npm global configuration, CLI installation, and daemon setup. If you prefer more control, you can also install manually:

# Option A: npm (requires Node 22+ already installed)

npm install -g openclaw@latest

openclaw onboard --install-daemon

# Option B: Install from source (for contributors)

git clone https://github.com/openclaw/openclaw.git

cd openclaw

pnpm install && pnpm ui:build && pnpm build

pnpm link --global

Verify the installation worked:

openclaw --version

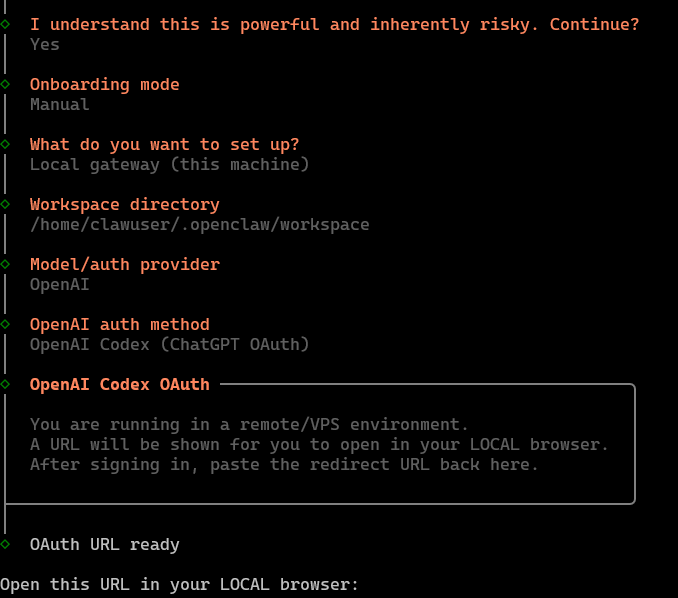

# Should output: OpenClaw 2026.x.x

Step 2: The onboarding wizard

If you used the installer script, the onboarding wizard launches automatically. If you installed manually, start it with:

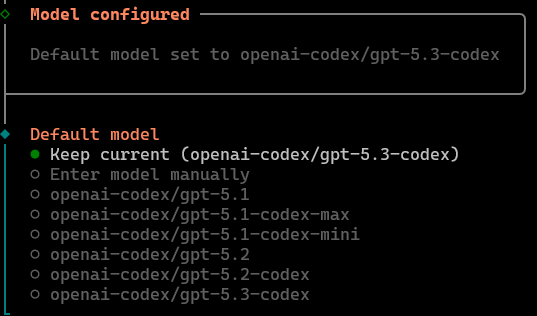

openclaw onboard --install-daemon

The wizard walks you through four configuration decisions:

- Gateway Location: Select

Local. This runs the gateway on the same machine, listening onlocalhost:18789. - Gateway Auth: Choose how the CLI authenticates with the gateway. Options include

Token (recommended),Password, orNone(for development only). - Model Provider: Select your LLM provider. Options include

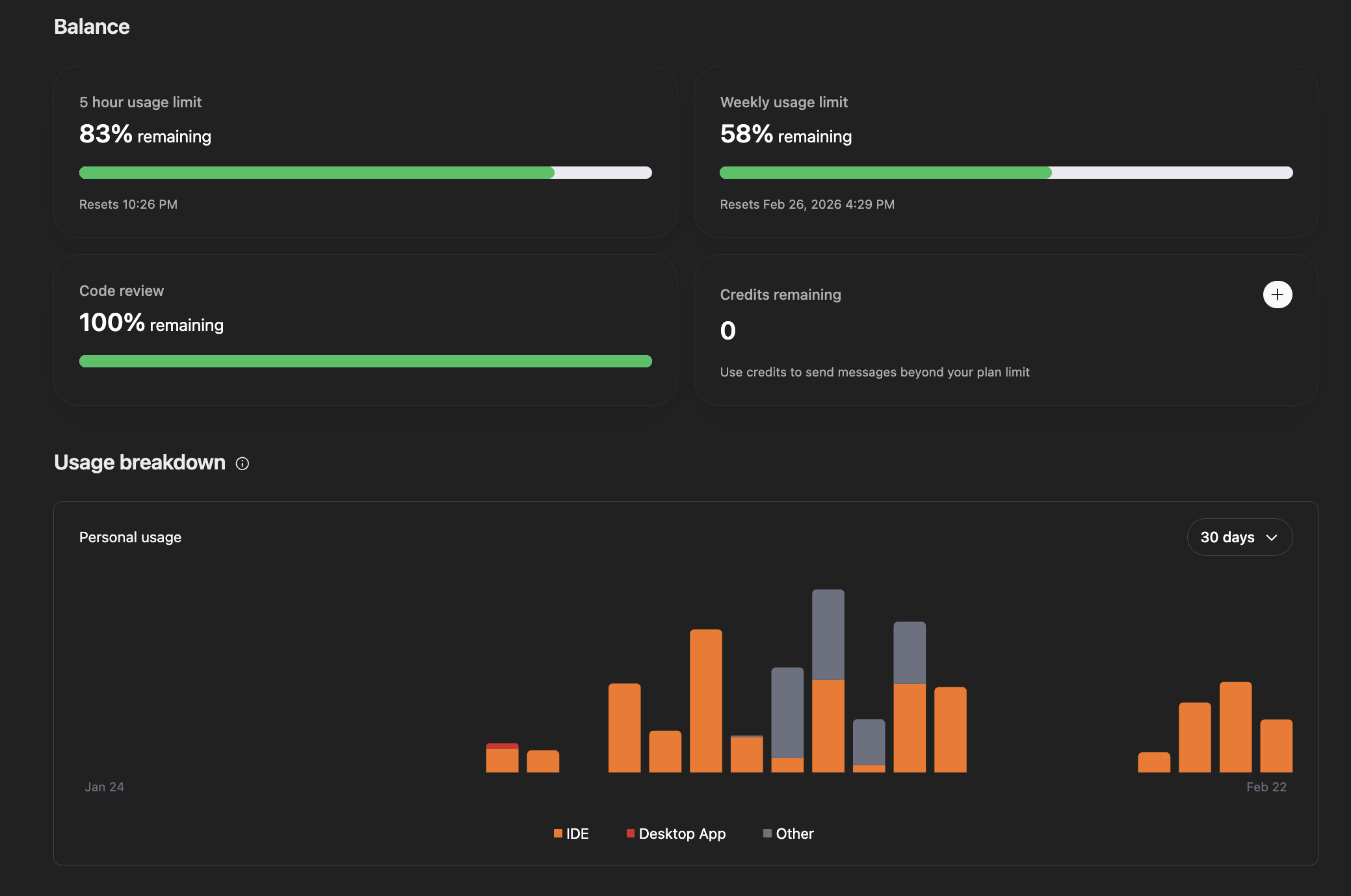

OpenAI (OAuth),Anthropic (API key),Ollama (local models), and others. - Primary Model: Choose which model to use by default (e.g.,

gpt-4o,claude-sonnet-4-5,ollama/qwen3:32b).

For this walkthrough, we'll use OpenAI OAuth, which lets you leverage your existing ChatGPT subscription (including Codex access) without managing API keys separately.

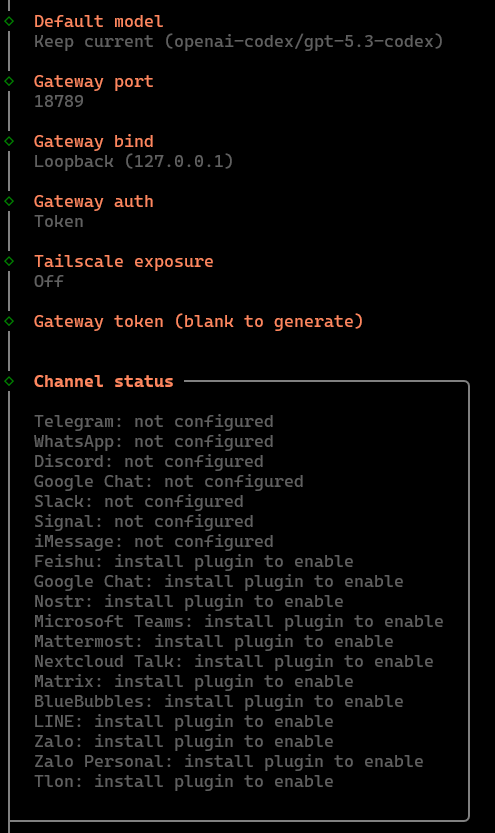

Step 3: Authenticate with OpenAI OAuth

When you select OpenAI OAuth, the wizard displays a URL. Open this URL in your browser and sign in with your OpenAI account:

After signing in, your browser will redirect to a URL containing an authorization code. Copy this entire redirect URL (not just the code) and paste it back into the OpenClaw terminal:

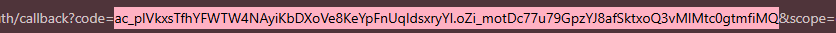

This OAuth flow links your OpenAI account to the OpenClaw gateway. Your agent will use your subscription credits for inference. You can monitor usage at:

https://chatgpt.com/codex/settings/usage

Other authentication methods

OpenAI OAuth is just one option. Here's when you'd pick the alternatives:

- Anthropic (API key): If you prefer Claude models. Paste your API key directly; no OAuth flow is needed. Set a spend limit in the Anthropic console to cap what the agent can cost you.

- Ollama (local): For fully self-hosted inference. No API keys, no cloud dependency, no per-token costs. Requires more RAM (32 GB+) and a capable GPU for good performance. Good for privacy-sensitive workloads.

- OpenRouter / other providers: For accessing multiple model providers through a single API key. Useful if you want to switch between models without reconfiguring.

If you use API keys (for any provider), always set spend limits on the provider's dashboard to prevent unexpected bills from a runaway agent loop.

Step 4: Complete the remaining configuration

The wizard continues with model selection and channel configuration. Follow the prompts to select your default model and configure initial messaging channels.

Once the wizard completes, OpenClaw writes its configuration to ~/.openclaw/openclaw.json and starts the gateway daemon. The gateway listens on localhost:18789 by default, so it's only reachable from the server itself (or through Tailscale if you configure forwarding later).
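To confirm the gateway really is bound only to loopback, you can inspect the listening sockets. A sketch that reads ss output and fails if anything listens on a given port outside 127.0.0.0/8 or [::1]; the function name is hypothetical, and 18789 is the default port mentioned above:

```shell
# Read `ss -tlnH` output on stdin and succeed only if every listener on port $1
# is bound to loopback (127.0.0.0/8 or [::1]). Fails if nothing listens on it.
loopback_only() {
  awk -v port="$1" '
    {
      n = length($4) - length(port)
      # keep rows whose "address:port" column (field 4) ends in ":<port>"
      if (substr($4, n) != ":" port) next
      seen = 1
      addr = substr($4, 1, n - 1)
      if (addr !~ /^127\./ && addr != "[::1]") { print "non-loopback: " $4; bad = 1 }
    }
    END { exit (bad || !seen) }
  '
}

# On the VPS:
#   ss -tlnH | loopback_only 18789 && echo "gateway is loopback-only"
```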

Verify everything is running:

# Check gateway status

systemctl --user status openclaw-gateway

# Quick connectivity test

curl -s http://localhost:18789/health

# View your configuration

cat ~/.openclaw/openclaw.json

Where things live

OpenClaw keeps everything under your home directory:

- ~/.openclaw/openclaw.json: Main configuration file (gateway auth, model providers, channel settings, tool profiles)

- ~/.openclaw/: All state, logs, skill data, and session history

- Gateway port: 18789 on localhost

- Skills directory: ~/.openclaw/skills (managed) or <workspace>/skills (per-workspace, highest precedence)

File permissions matter: openclaw.json should be 600 (user read/write only) and the ~/.openclaw/ directory should be 700.
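A small helper can check both modes at once. This sketch assumes GNU stat (the default on Ubuntu; macOS stat uses different flags), and the function name is our own:

```shell
# Compare a path's permission bits (octal, via GNU stat) with the expected mode.
check_mode() {
  local path="$1" expected="$2" actual
  actual=$(stat -c '%a' "$path") || return 1
  if [ "$actual" != "$expected" ]; then
    echo "$path is $actual, expected $expected" >&2
    return 1
  fi
}

# On the VPS:
#   check_mode ~/.openclaw 700 && check_mode ~/.openclaw/openclaw.json 600 && echo "permissions OK"
```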

The installer sets these correctly, but it's worth verifying after any manual edits:

# Verify file permissions are secure

ls -la ~/.openclaw/openclaw.json

# Should show: -rw------- (600)

# Fix if needed

chmod 600 ~/.openclaw/openclaw.json

chmod 700 ~/.openclaw

What we've accomplished

OpenClaw is installed, authenticated with your model provider, and the gateway daemon is running. The agent can now accept instructions through the CLI and will soon be connected to messaging channels like Telegram and WhatsApp. In a later section, we'll set up the Telegram bot channel, your first external interface to the agent.

4. Hardening Audit Pack

Lock down your OpenClaw deployment with automated security audits, hardened configs, Docker isolation, and emergency procedures.

Running an AI agent on a VPS that responds to WhatsApp and Telegram is powerful, but it comes with a real attack surface. A misconfigured DM policy, an exposed gateway port, or an overly permissive tool profile can turn your assistant into a liability. This section walks through the full hardening stack: the built-in audit tool, a production-ready baseline config, Docker sandboxing, network egress filtering, credential brokering, and what to do when something goes wrong.